|

IPCC

1.0

|

|

IPCC

1.0

|

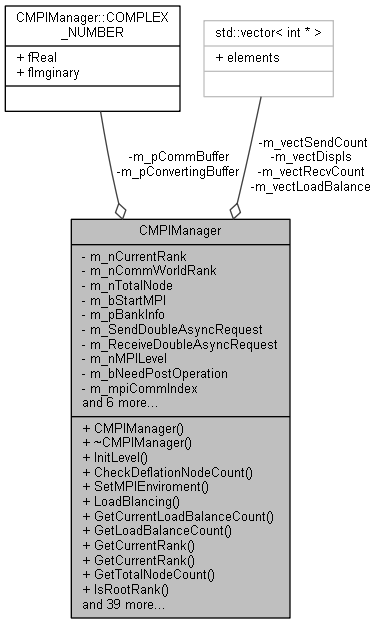

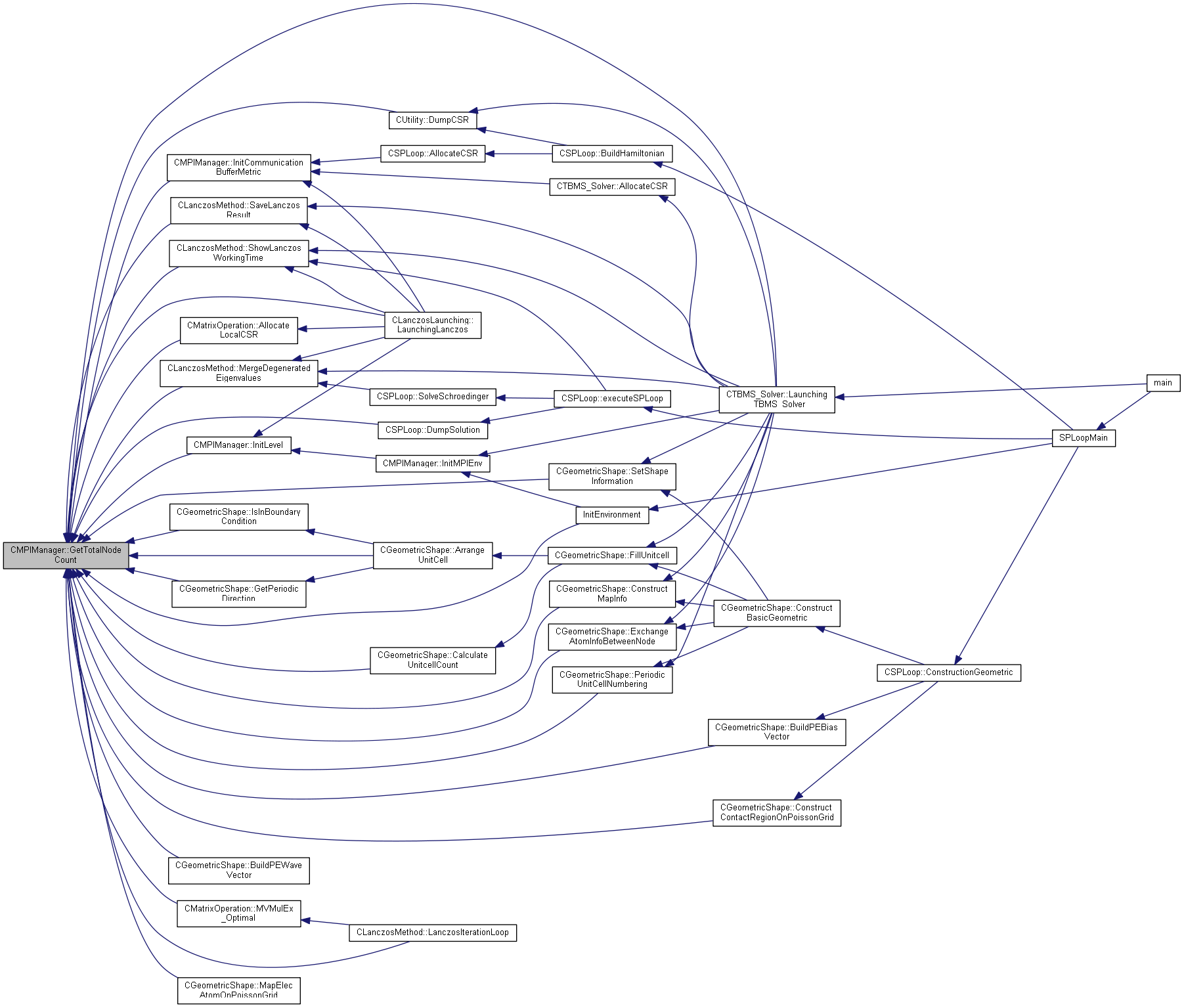

MPI management class. More...

#include "MPIManager.h"

Classes | |

| struct | COMPLEX_NUMBER |

| Complex number. More... | |

Public Member Functions | |

| CMPIManager () | |

| Constructor. More... | |

| ~CMPIManager () | |

| Destructor. More... | |

Static Public Member Functions | |

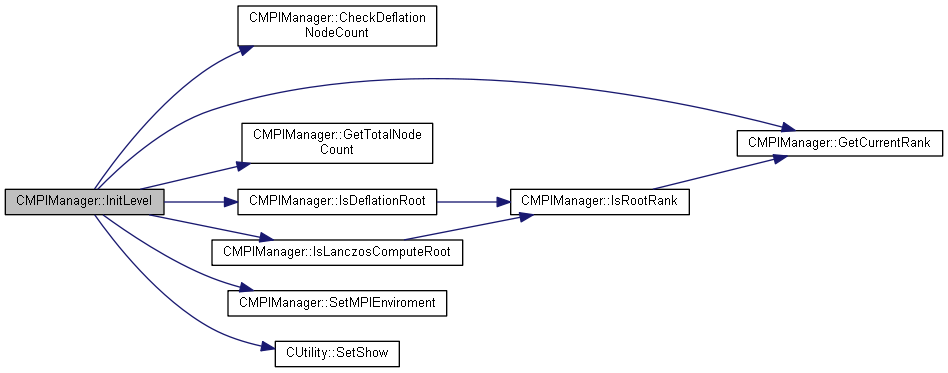

| static bool | InitLevel (int nMPILevel, int nFindingDegeneratedEVCount) |

| Initialize the MPI level; the lowest level is for multi-node calculation for Lanczos. More... | |

| static bool | CheckDeflationNodeCount (int nNeedNodeCount) |

| Checking node counts fix to deflation group. More... | |

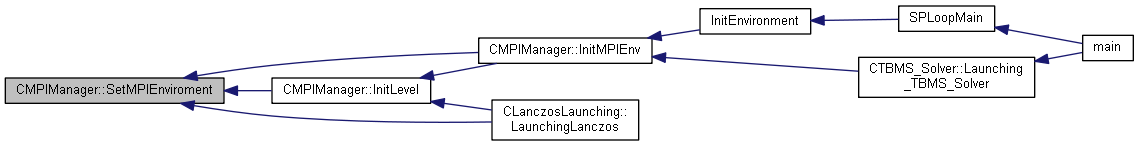

| static void | SetMPIEnviroment (int nRank, int nTotalNode) |

| Set MPI Enviroment. More... | |

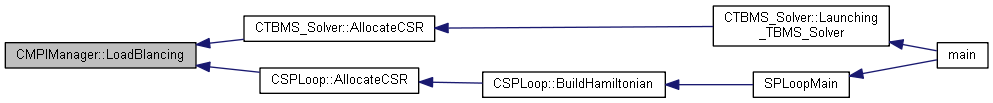

| static void | LoadBlancing (int nElementCount) |

| Load blancing for MPI, This function for lanczos solving with geometric constrcution. More... | |

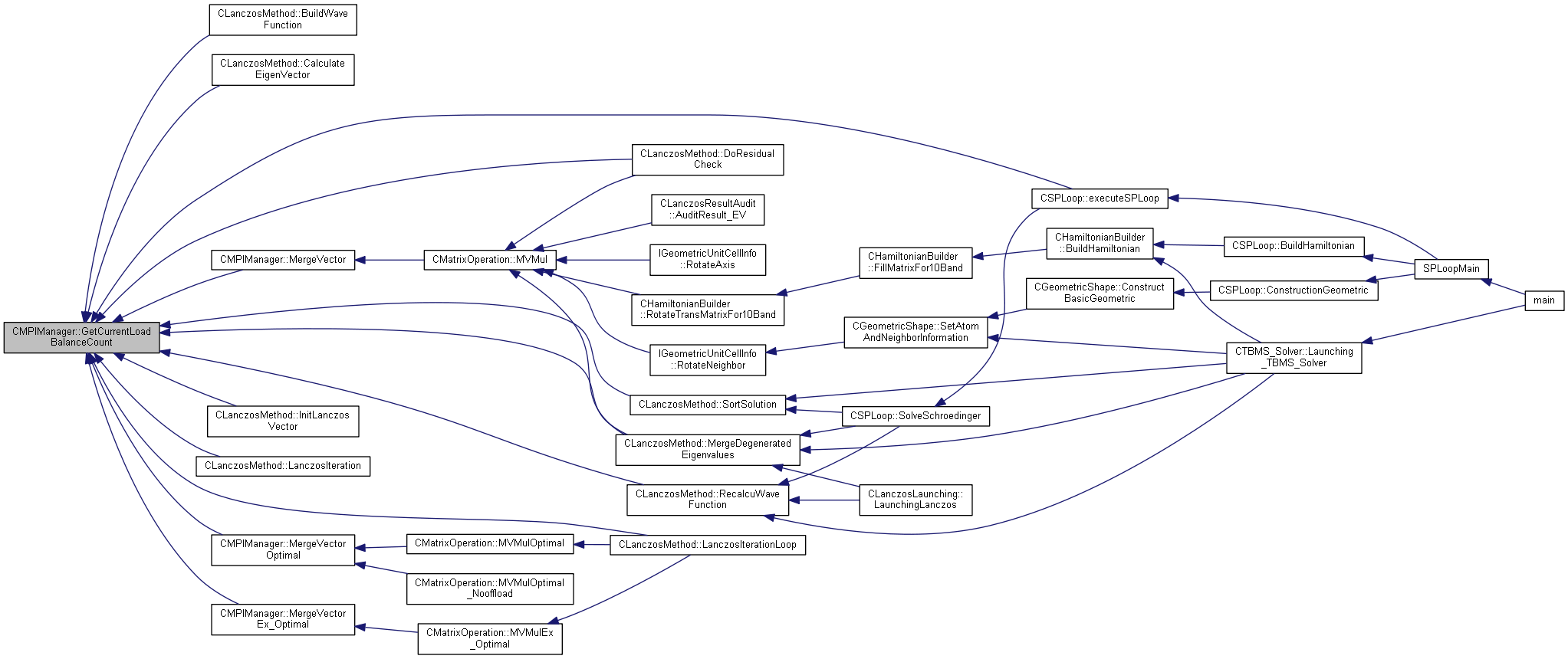

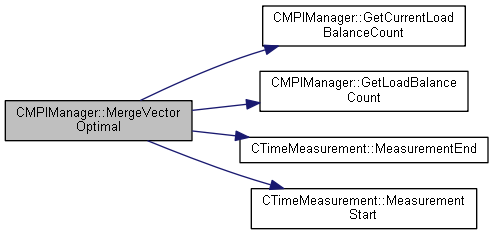

| static int | GetCurrentLoadBalanceCount (int nLBIndex) |

| Get Current node's rank load balancing number. More... | |

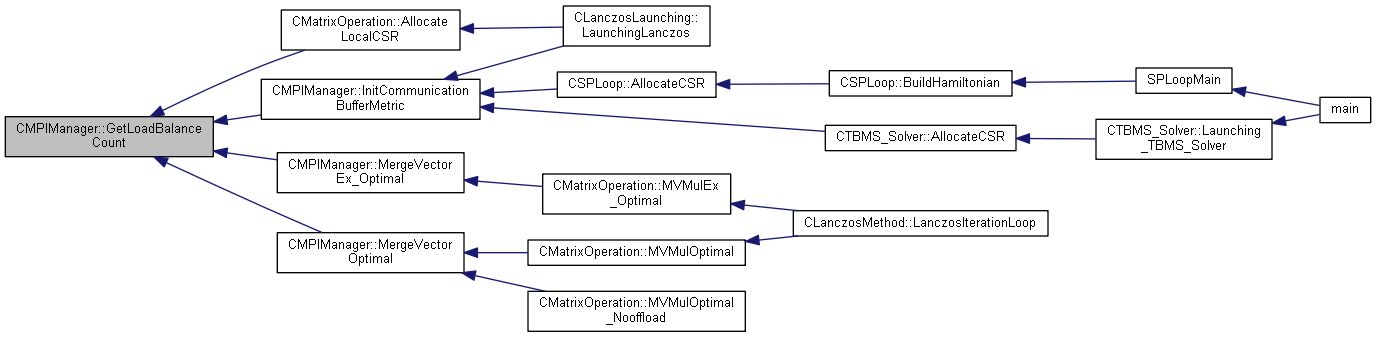

| static int | GetLoadBalanceCount (int nRank, int nLBIndex) |

| static int | GetCurrentRank () |

| static int | GetCurrentRank (MPI_Comm comm) |

| Get Current node's rank number. More... | |

| static int | GetTotalNodeCount () |

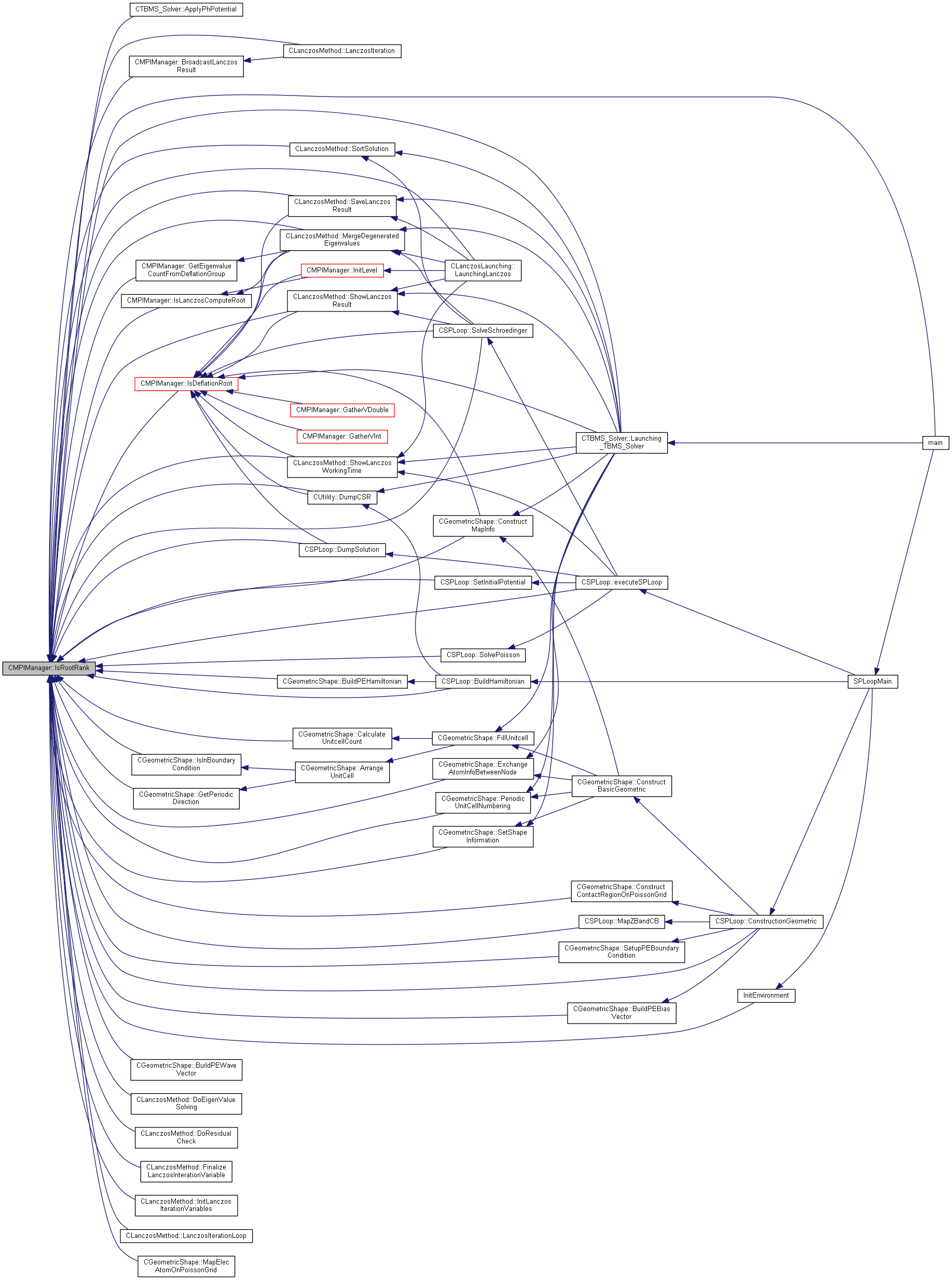

| static bool | IsRootRank () |

| Get Total node count. More... | |

| static bool | IsRootRank (MPI_Comm comm) |

| Check this node is root rank in 'comm' MPI_Comm. More... | |

| static bool | IsInMPIRoutine () |

| static void | BroadcastVector (CMatrixOperation::CVector *pVector) |

| Check this processing running on MPI Enviorment. More... | |

| static void | BroadcastBool (bool *boolValue, int nRootRank=0) |

| Broadcast a boolean value. More... | |

| static void | BroadcastDouble (double *pValue, unsigned int nSize, int nRootRank=0, MPI_Comm comm=MPI_COMM_NULL) |

| Broadcast double values. More... | |

| static void | BroadcastInt (int *pValue, unsigned int nSize, int nRootRank=0, MPI_Comm comm=MPI_COMM_NULL) |

| Broadcast integer values. More... | |

| static void | BroadcastLanczosResult (CLanczosMethod::LPEIGENVALUE_RESULT lpResult, int nIterationCount) |

| Broadcast Lanczos result. More... | |

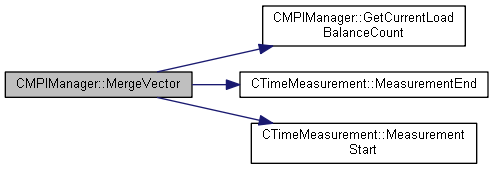

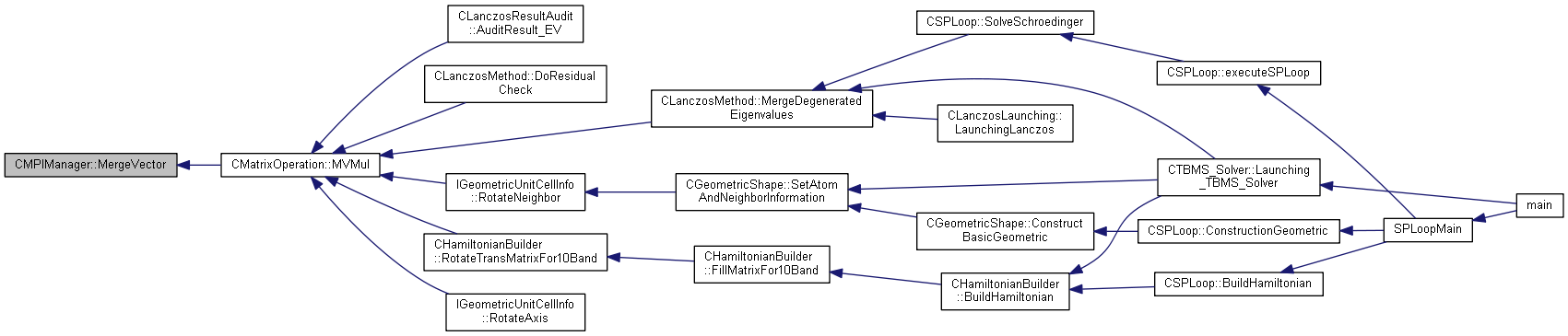

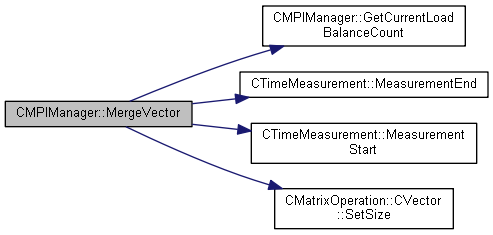

| static void | MergeVector (CMatrixOperation::CVector *pVector, CMatrixOperation::CVector *pResultVector, unsigned int nMergeSize, int nLBIndex) |

| Merge vector to sub rank. More... | |

| static void | MergeVector (CMatrixOperation::CVector *pVector, unsigned int nMergeSize, int nLBIndex) |

| Merge vector to sub rank. More... | |

| static void | MergeVectorOptimal (CMatrixOperation::CVector *pSrcVector, CMatrixOperation::CVector *pResultVector, unsigned int nMergeSize, double fFirstIndex, int nLBIndex) |

| Merge vector to sub rank, operated without vector class member function call. More... | |

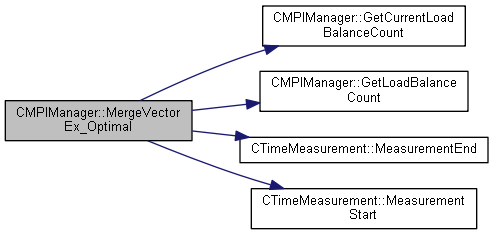

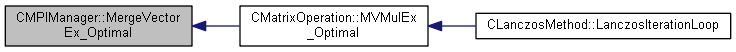

| static void | MergeVectorEx_Optimal (CMatrixOperation::CVector *pVector, CMatrixOperation::CVector *pResultVector, unsigned int nMergeSize, double fFirstIndex, unsigned int nSizeFromPrevRank, unsigned int nSizeFromNextRank, unsigned int nSizetoPrevRank, unsigned int nSizetoNextRank, unsigned int *mPos, int nLBIndex) |

| Merge vector for 1 layer exchanging. More... | |

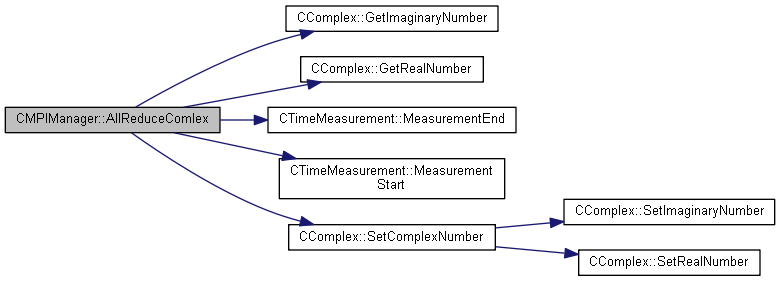

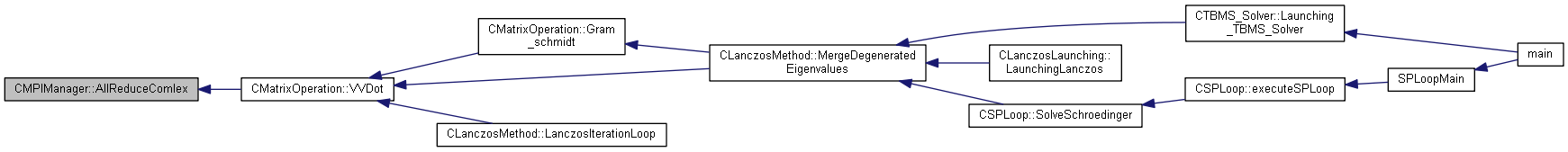

| static void | AllReduceComlex (CComplex *pNumber, CTimeMeasurement::MEASUREMENT_INDEX INDEX=CTimeMeasurement::COMM) |

| Do all reduce function with CKNComplex. More... | |

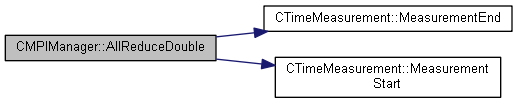

| static double | AllReduceDouble (double fNumber) |

| Do all reduce function with CKNComplex. More... | |

| static int | GetRootRank () |

| static void | FinalizeManager () |

| Get Root rank. More... | |

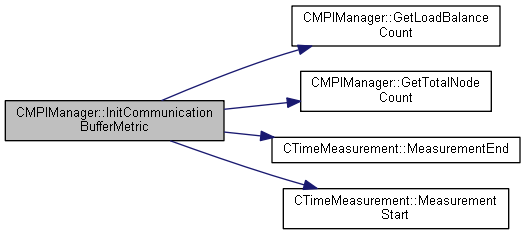

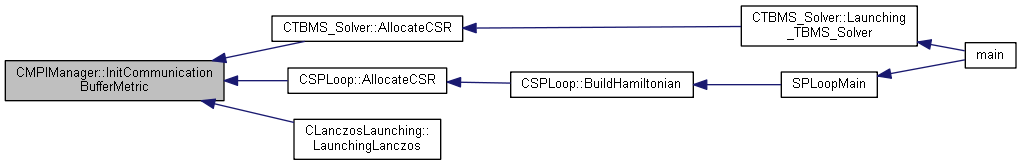

| static int | InitCommunicationBufferMetric () |

| Initializing MPI Communication buffer for MVMul. More... | |

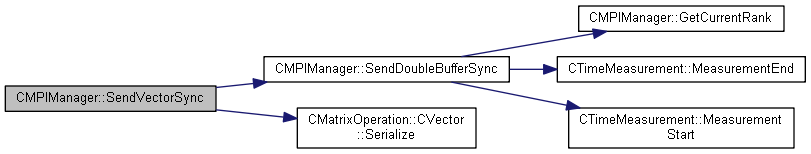

| static void | SendDoubleBufferSync (int nTargetRank, double *pBuffer, int nSize, MPI_Request *req, MPI_Comm commWorld=MPI_COMM_NULL) |

| Sending buffer for double data array with sync. More... | |

| static void | WaitSendDoubleBufferSync (MPI_Request *req) |

| Waiting sending double buffer sync function. More... | |

| static void | ReceiveDoubleBufferSync (int nSourceRank, double *pBuffer, int nSize, MPI_Request *req, MPI_Comm commWorld=MPI_COMM_NULL) |

| Receivinging buffer for double data array with sync. More... | |

| static void | WaitReceiveDoubleBufferAsync (MPI_Request *req) |

| Waiting recevinging double buffer sync function. More... | |

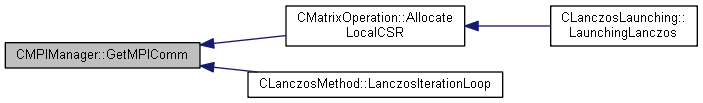

| static MPI_Comm | GetMPIComm () |

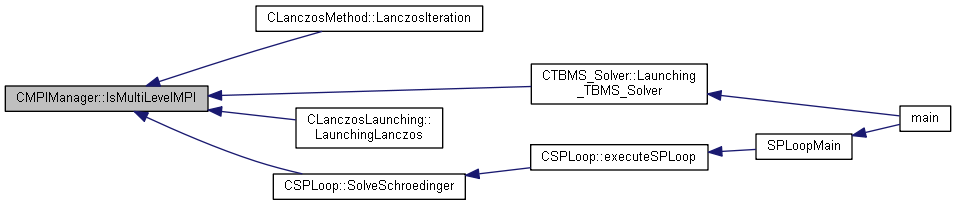

| static bool | IsMultiLevelMPI () |

| Get MPI_Comm. More... | |

| static void | BarrierAllComm () |

| Is Multilevel MPI Setting. More... | |

| static void | Barrier () |

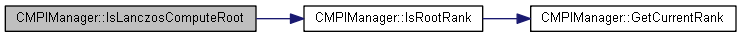

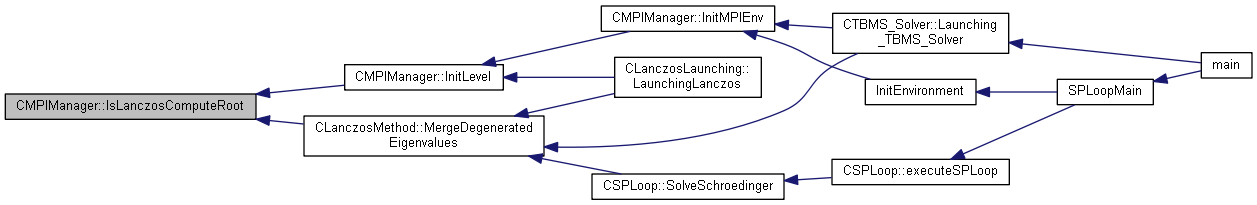

| static bool | IsLanczosComputeRoot () |

| Barrier current deflation group. More... | |

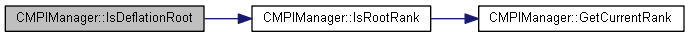

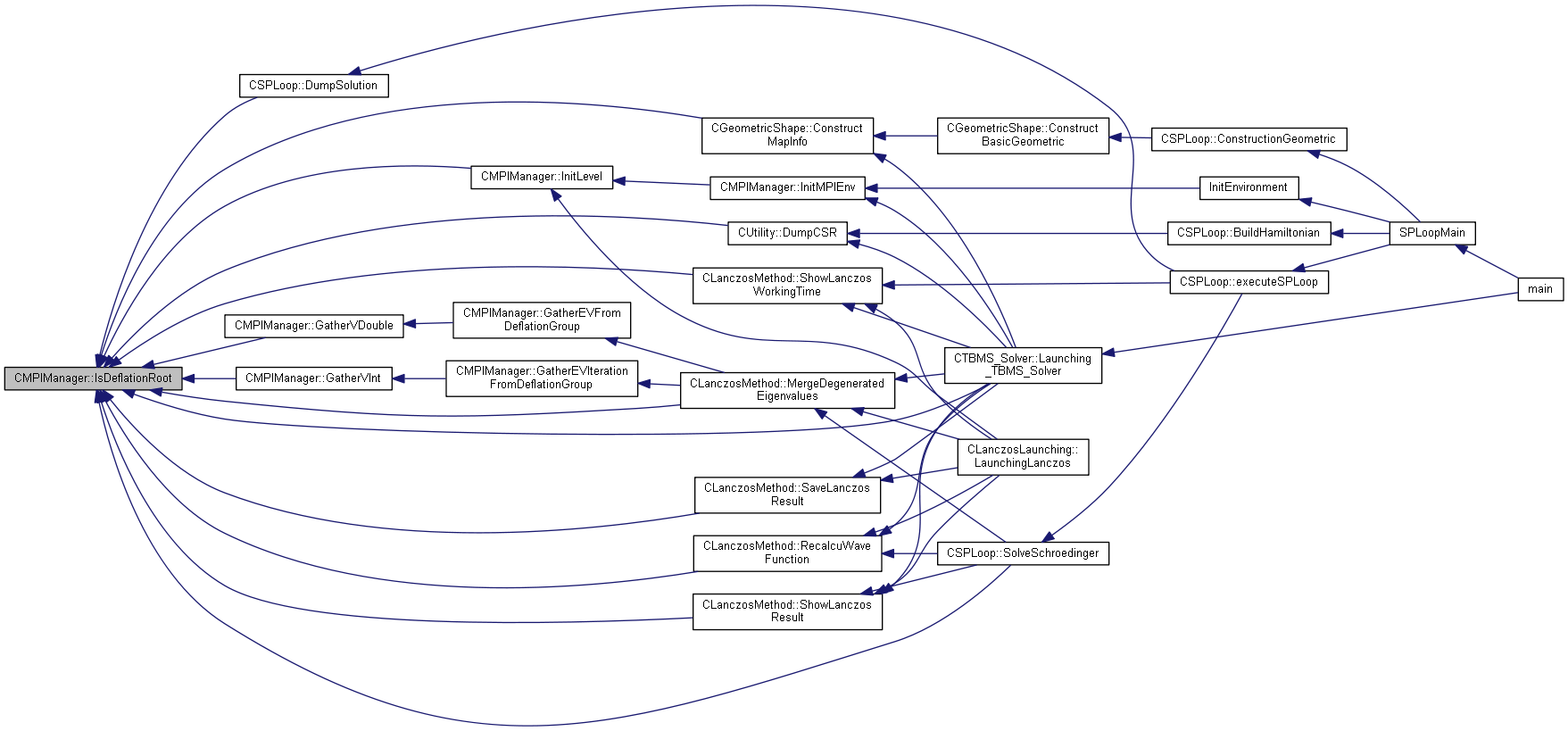

| static bool | IsDeflationRoot () |

| Checking is root rank of Lanczos computation. More... | |

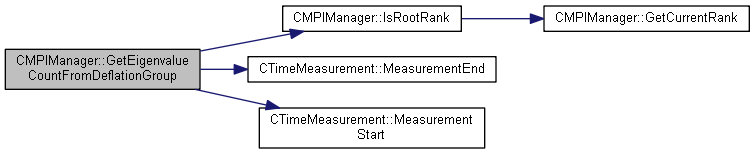

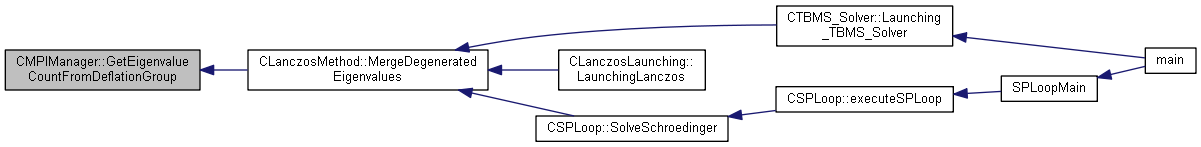

| static int * | GetEigenvalueCountFromDeflationGroup (int nDeflationGroupCount, int nLocalEVCount) |

| Checking is root rank of Deflation computation. More... | |

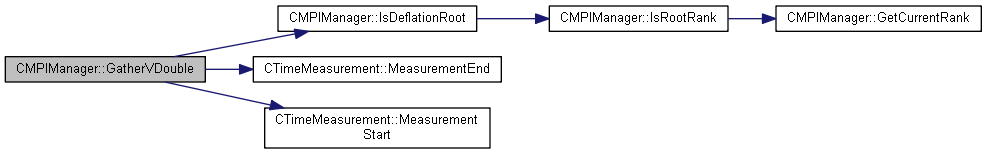

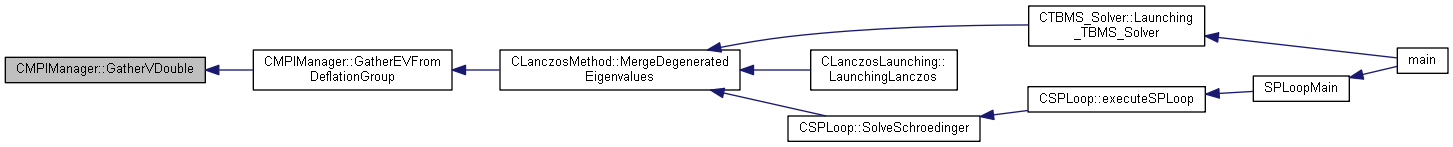

| static void | GatherVDouble (int nSourceCount, double *pReceiveBuffer, int *pSourceCount, double *pSendBuffer, int nSendCount, MPI_Comm comm=MPI_COMM_NULL) |

| GatherV for double wrapping function. More... | |

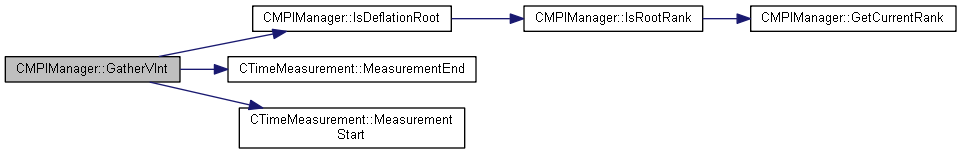

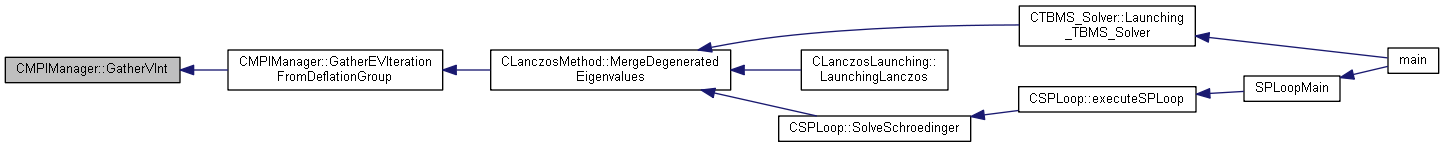

| static void | GatherVInt (int nSourceCount, int *pReceiveBuffer, int *pSourceCount, int *pSendBuffer, int nSendCount, MPI_Comm comm=MPI_COMM_NULL) |

| GahterV for int wrapping function. More... | |

| static void | GatherEVFromDeflationGroup (int nSourceCount, double *pReceiveBuffer, int *pSourceCount, double *pSendBuffer, int nSendCount) |

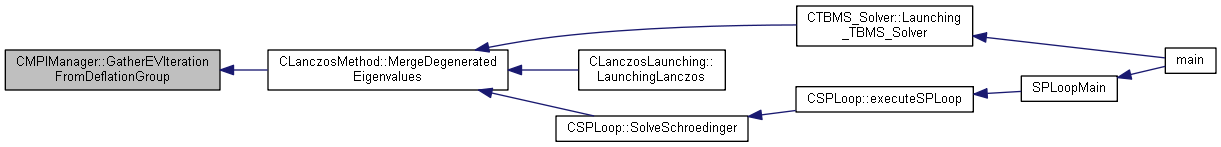

| static void | GatherEVIterationFromDeflationGroup (int nSourceCount, int *pReceiveBuffer, int *pSourceCount, int *pSendBuffer, int nSendCount) |

| Gather eigenvalue from deflation group. More... | |

| static void | ExchangeCommand (double *pfCommand, MPI_Comm comm) |

| Gather eigenvalue finding iteration number from deflation group. More... | |

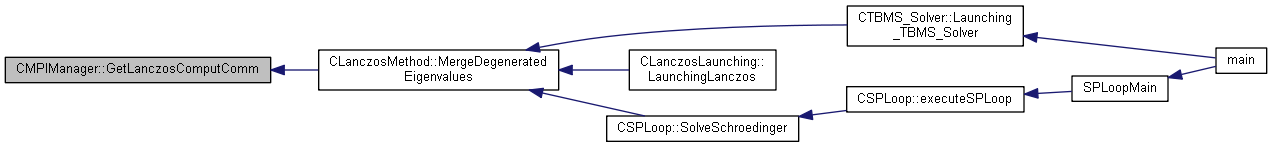

| static MPI_Comm | GetLanczosComputComm () |

| static MPI_Comm | GetDeflationComm () |

| Getting Lanczos computing group MPI_Comm. More... | |

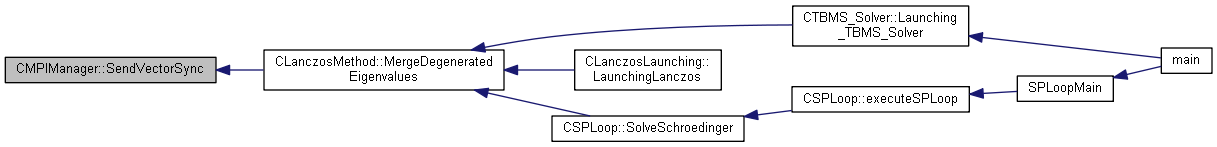

| static void | SendVectorSync (int nTargetRank, CMatrixOperation::CVector *pVector, int nSize, MPI_Request *req, MPI_Comm commWorld=MPI_COMM_NULL) |

| Getting Deflation computing group MPI_Comm. More... | |

| static void | ReceiveVectorSync (int nSourceRank, CMatrixOperation::CVector *pVector, int nSize, MPI_Request *req, MPI_Comm commWorld=MPI_COMM_NULL) |

| Receiving Vector with sync. More... | |

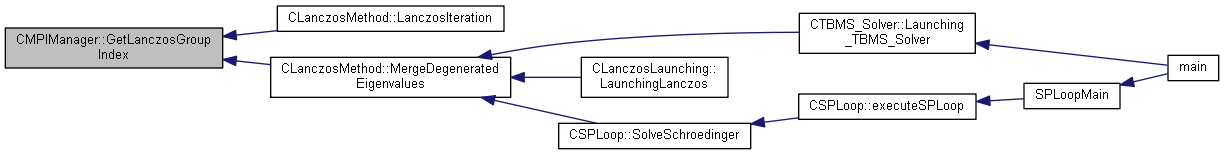

| static unsigned int | GetLanczosGroupIndex () |

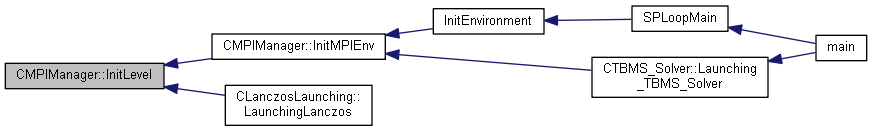

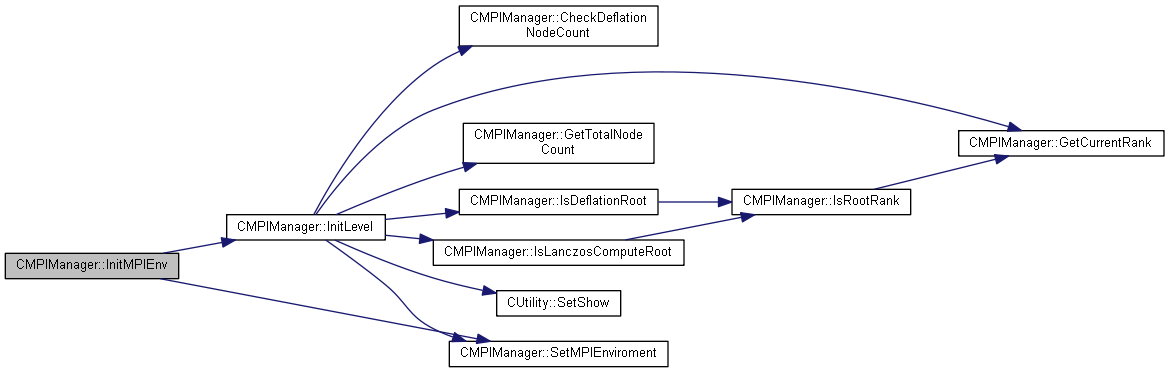

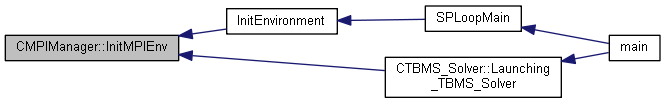

| static bool | InitMPIEnv (bool &bMPI, CCommandFileParser::LPINPUT_CMD_PARAM lpParam) |

| Getting Lanczos group index. More... | |

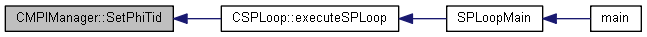

| static void | SetPhiTid (int *tid) |

Private Types | |

| typedef struct CMPIManager::COMPLEX_NUMBER * | LPCOMPLEX_NUMBER |

Static Private Attributes | |

| static int | m_nCurrentRank = 0 |

| MPI Rank. More... | |

| static int | m_nCommWorldRank = 0 |

| MPI Rank before split. More... | |

| static int | m_nTotalNode = 1 |

| Total node count. More... | |

| static bool | m_bStartMPI = false |

| MPI_Init call or not. More... | |

| static std::vector< int * > | m_vectLoadBalance |

| Load balancing for MPI communication. More... | |

| static LPCOMPLEX_NUMBER | m_pCommBuffer = NULL |

| Data buffer for MPI Communication. More... | |

| static LPCOMPLEX_NUMBER | m_pConvertingBuffer = NULL |

| Data buffer for Vector converting. More... | |

| static std::vector< int * > | m_vectRecvCount |

| Receive counts for MPI communication. More... | |

| static std::vector< int * > | m_vectSendCount |

| Send counts for MPI communication. More... | |

| static int * | m_pBankInfo = NULL |

| Bank information after MPI split. More... | |

| static std::vector< int * > | m_vectDispls |

| Displacements for MPI communication. More... | |

| static MPI_Request | m_SendDoubleAsyncRequest = MPI_REQUEST_NULL |

| Request for sending double. More... | |

| static MPI_Request | m_ReceiveDoubleAsyncRequest = MPI_REQUEST_NULL |

| Request for receiving double. More... | |

| static unsigned int | m_nMPILevel = 1 |

| MPI Level. More... | |

| static bool | m_bNeedPostOperation [10] = { false, false, false, false, false, false, false, false, false, false } |

| MPI Level. More... | |

| static MPI_Comm | m_mpiCommIndex = MPI_COMM_WORLD |

| Lanczos Method MPI_Comm. More... | |

| static MPI_Comm | m_deflationComm = MPI_COMM_NULL |

| Deflation computing MPI_Comm. More... | |

| static MPI_Group | m_lanczosGroup = MPI_GROUP_EMPTY |

| MPI Group for Lanczos computation. More... | |

| static MPI_Group | m_deflationGroup = MPI_GROUP_EMPTY |

| MPI Group for Deflation computation. More... | |

| static unsigned int | m_nLanczosGroupIndex = 0 |

| MPI Group index for Lanczos group. More... | |

| static bool | m_bMultiLevel = false |

| Flag for Multilevel MPI group. More... | |

| static int | m_nLBCount = 0 |

| CMPIManager::CMPIManager () | private |

| CMPIManager::~CMPIManager () |

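| void CMPIManager::AllReduceComlex (CComplex *pNumber, CTimeMeasurement::MEASUREMENT_INDEX INDEX=CTimeMeasurement::COMM) | static |

Perform an all-reduce on a CComplex value.

| pNumber | Value to sum |

| INDEX | Time measurement index |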

Definition at line 571 of file MPIManager.cpp.

References CComplex::GetImaginaryNumber(), CComplex::GetRealNumber(), m_mpiCommIndex, CTimeMeasurement::MeasurementEnd(), CTimeMeasurement::MeasurementStart(), and CComplex::SetComplexNumber().

Referenced by CMatrixOperation::VVDot().

|

static |

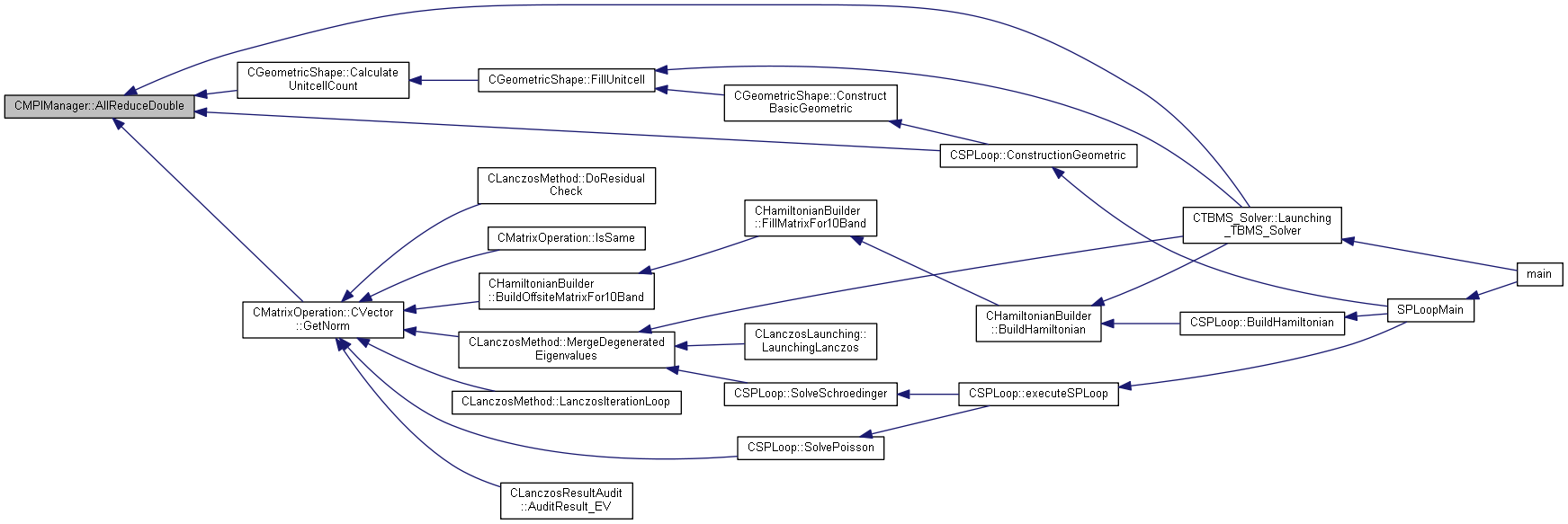

Do all reduce function with CKNComplex.

| fNumber | Variable that want to sum |

Definition at line 593 of file MPIManager.cpp.

References CTimeMeasurement::COMM, m_mpiCommIndex, CTimeMeasurement::MeasurementEnd(), and CTimeMeasurement::MeasurementStart().

Referenced by CGeometricShape::CalculateUnitcellCount(), CSPLoop::ConstructionGeometric(), CMatrixOperation::CVector::GetNorm(), and CTBMS_Solver::Launching_TBMS_Solver().

|

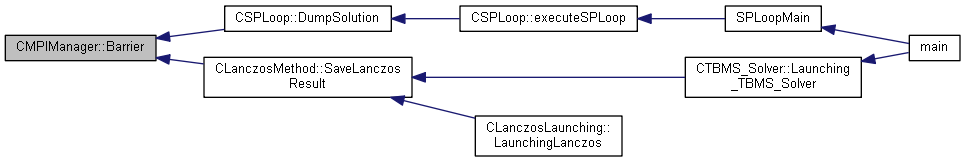

inlinestatic |

Definition at line 67 of file MPIManager.h.

References m_mpiCommIndex.

Referenced by CSPLoop::DumpSolution(), and CLanczosMethod::SaveLanczosResult().

|

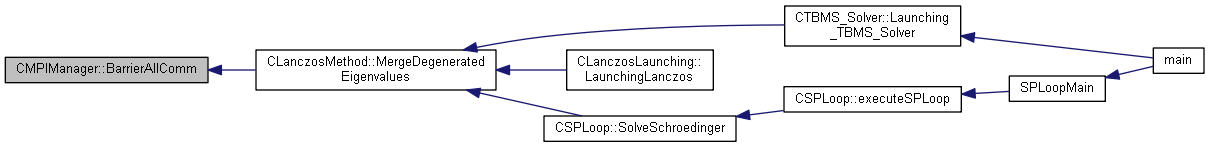

static |

Is Multilevel MPI Setting.

Barrier MPI_COMM_WORLD

< Caution! This function wait all rank even if Comm split into several colors

Definition at line 733 of file MPIManager.cpp.

Referenced by CLanczosMethod::MergeDegeneratedEigenvalues().

|

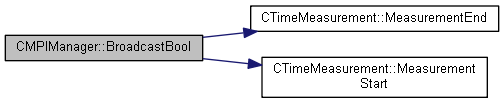

static |

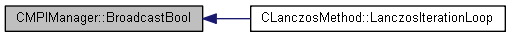

Broadcst boolean value.

| boolValue | bool variable for want to broadcasting |

| nRootRank | Root rank index |

Definition at line 470 of file MPIManager.cpp.

References CTimeMeasurement::COMM, m_mpiCommIndex, CTimeMeasurement::MeasurementEnd(), and CTimeMeasurement::MeasurementStart().

Referenced by CLanczosMethod::LanczosIterationLoop().

|

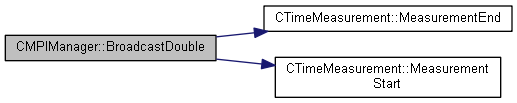

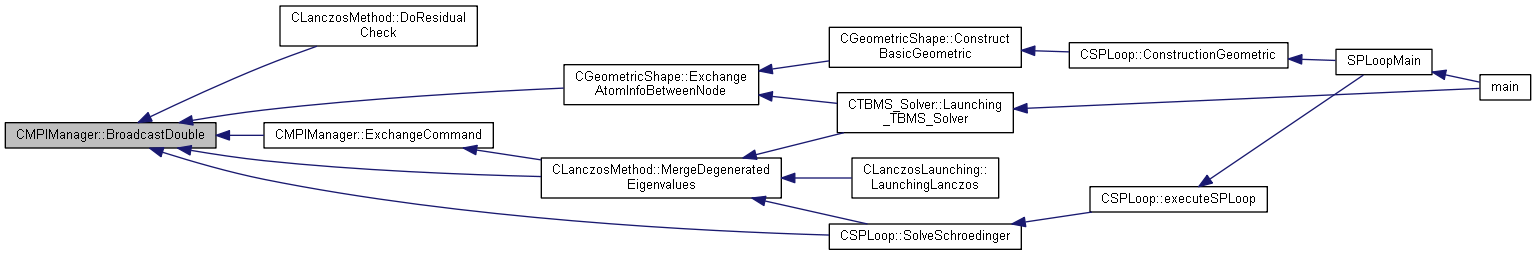

static |

Broadcst boolean value.

| pValue | double data buffer for want to broadcasting |

| nSize | Data buffer size |

| nRootRank | Root rank index |

Definition at line 486 of file MPIManager.cpp.

References CTimeMeasurement::COMM, m_mpiCommIndex, CTimeMeasurement::MeasurementEnd(), and CTimeMeasurement::MeasurementStart().

Referenced by CLanczosMethod::DoResidualCheck(), CGeometricShape::ExchangeAtomInfoBetweenNode(), ExchangeCommand(), CLanczosMethod::MergeDegeneratedEigenvalues(), and CSPLoop::SolveSchroedinger().

|

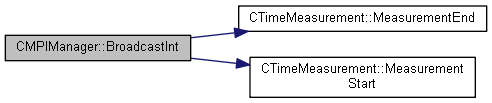

static |

Broadcst boolean value.

| [in,out] | pValue | Broadcasting variable |

| nSize | Broadcasting variable size | |

| nRootRank | Root rank number | |

| comm | Broadcating MPI_Comm range |

Definition at line 506 of file MPIManager.cpp.

References CTimeMeasurement::COMM, m_mpiCommIndex, CTimeMeasurement::MeasurementEnd(), and CTimeMeasurement::MeasurementStart().

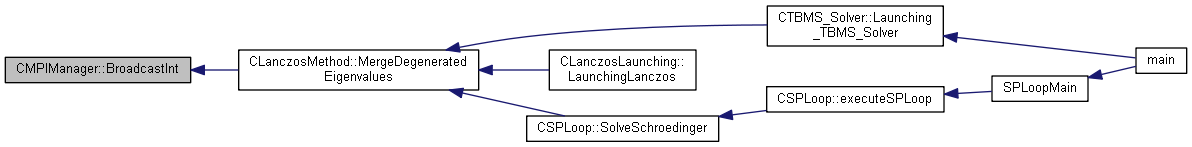

Referenced by CLanczosMethod::MergeDegeneratedEigenvalues().

|

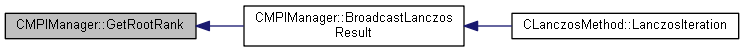

static |

Broadcast Lanczos result.

| lpResult | Lanczos method result that want to boardcasting |

| nIterationCount | Current iteration count |

Definition at line 524 of file MPIManager.cpp.

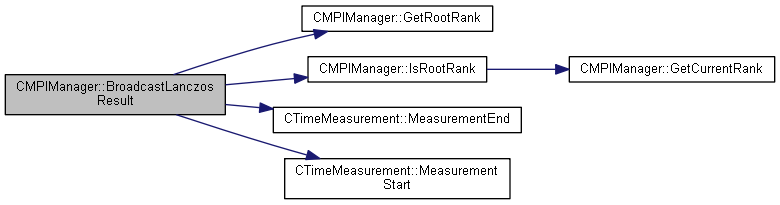

References CTimeMeasurement::COMM, GetRootRank(), IsRootRank(), m_mpiCommIndex, CTimeMeasurement::MALLOC, CTimeMeasurement::MeasurementEnd(), CTimeMeasurement::MeasurementStart(), CLanczosMethod::EIGENVALUE_RESULT::nEigenValueCount, CLanczosMethod::EIGENVALUE_RESULT::nEigenValueCountForMemeory, CLanczosMethod::EIGENVALUE_RESULT::nEigenVectorSize, CLanczosMethod::EIGENVALUE_RESULT::nMaxEigenValueFoundIteration, CLanczosMethod::EIGENVALUE_RESULT::pEigenValueFoundIteration, and CLanczosMethod::EIGENVALUE_RESULT::pEigenVectors.

Referenced by CLanczosMethod::LanczosIteration().

| void CMPIManager::BroadcastVector (CMatrixOperation::CVector *pVector) | static |

Broadcast vector to sub rank.

| pVector | Vector to broadcast |

Definition at line 228 of file MPIManager.cpp.

References IsInMPIRoutine().

|

static |

Checking node counts fix to deflation group.

| nNeedNodeCount | Deflation group count |

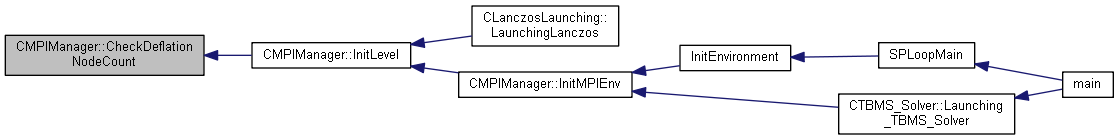

Definition at line 129 of file MPIManager.cpp.

References m_nTotalNode.

Referenced by InitLevel().

|

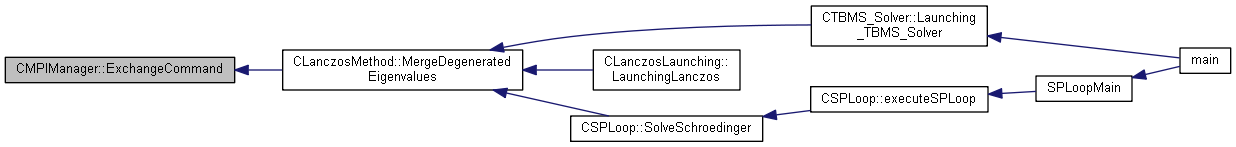

static |

Gather eigenvalue finding iteration number from deflation group.

Exchanging command between MPI_Comm

| pfCommand | Command buffer |

| comm | MPI_Comm for exchaning command |

Definition at line 826 of file MPIManager.cpp.

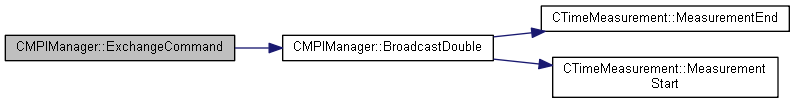

References BroadcastDouble(), and COMMAND_SIZE.

Referenced by CLanczosMethod::MergeDegeneratedEigenvalues().

|

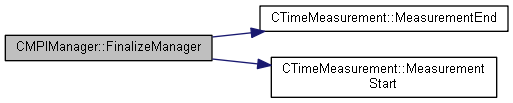

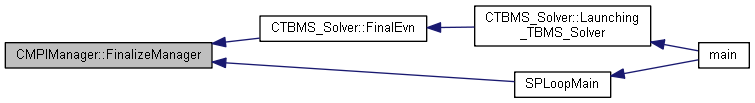

static |

Get Root rank.

Release all memory

Definition at line 617 of file MPIManager.cpp.

References FREE_MEM, CTimeMeasurement::FREE_MEM, m_bStartMPI, m_deflationComm, m_deflationGroup, m_lanczosGroup, m_mpiCommIndex, m_nCurrentRank, m_nLBCount, m_nTotalNode, m_pBankInfo, m_vectDispls, m_vectLoadBalance, m_vectRecvCount, m_vectSendCount, CTimeMeasurement::MeasurementEnd(), and CTimeMeasurement::MeasurementStart().

Referenced by CTBMS_Solver::FinalEvn(), and SPLoopMain().

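| void CMPIManager::GatherEVFromDeflationGroup (int nSourceCount, double *pReceiveBuffer, int *pSourceCount, double *pSendBuffer, int nSendCount) | inline static |

Gather eigenvalues from the deflation group.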

Definition at line 73 of file MPIManager.h.

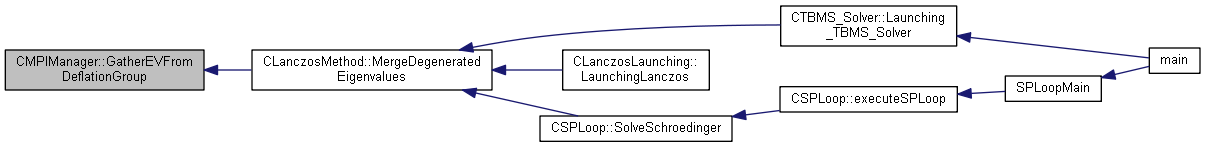

References GatherVDouble(), and m_deflationComm.

Referenced by CLanczosMethod::MergeDegeneratedEigenvalues().

|

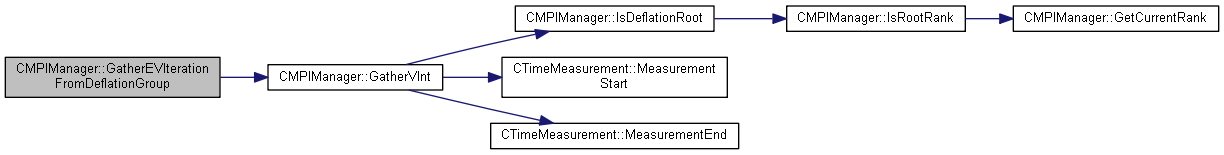

inlinestatic |

Gather eigenvalue from deflation group.

Definition at line 74 of file MPIManager.h.

References GatherVInt(), and m_deflationComm.

Referenced by CLanczosMethod::MergeDegeneratedEigenvalues().

|

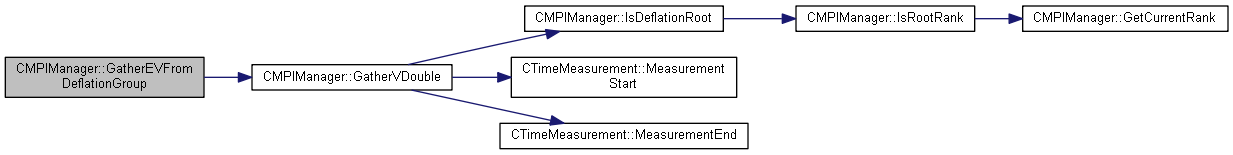

static |

GatherV for double wrapping function.

| nSourceCount | Gather double buffer source count | |

| [out] | pReceiveBuffer | Saving buffer |

| pSourceCount | Srouce counts (ref. MPI_Gatherv) | |

| pSendBuffer | Sending buffer | |

| nSendCount | Sending counts | |

| comm | MPI_Comm for gather data |

Definition at line 766 of file MPIManager.cpp.

References CTimeMeasurement::COMM, FREE_MEM, IsDeflationRoot(), m_mpiCommIndex, CTimeMeasurement::MeasurementEnd(), and CTimeMeasurement::MeasurementStart().

Referenced by GatherEVFromDeflationGroup().
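The `pSourceCount` parameter refers to the per-rank counts of `MPI_Gatherv`, which also needs a displacement for each rank. The following is a minimal sketch of how such displacements can be derived from the counts as an exclusive prefix sum. `ComputeDisplacements` is a hypothetical helper written for illustration; it is not part of `CMPIManager`, and the actual implementation in MPIManager.cpp may differ.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Hypothetical helper (not part of CMPIManager): builds the displacement
// array that MPI_Gatherv expects from the per-rank receive counts.
// displs[r] is the offset in the receive buffer where rank r's data starts.
std::vector<int> ComputeDisplacements(const std::vector<int>& counts) {
    std::vector<int> displs(counts.size(), 0);
    for (std::size_t r = 1; r < counts.size(); ++r) {
        assert(counts[r - 1] >= 0);  // counts must be non-negative
        displs[r] = displs[r - 1] + counts[r - 1];
    }
    return displs;
}
```

With counts `{3, 3, 2, 2}` the receive buffer needs 10 elements and rank 2 writes starting at offset 6.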

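| void CMPIManager::GatherVInt (int nSourceCount, int *pReceiveBuffer, int *pSourceCount, int *pSendBuffer, int nSendCount, MPI_Comm comm=MPI_COMM_NULL) | static |

GatherV wrapper function for int.

| | nSourceCount | Source count for the gathered int buffer |

| [out] | pReceiveBuffer | Buffer that stores the gathered data |

| | pSourceCount | Source counts (ref. MPI_Gatherv) |

| | pSendBuffer | Sending buffer |

| | nSendCount | Sending count |

| | comm | MPI_Comm for gathering data |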

Definition at line 798 of file MPIManager.cpp.

References CTimeMeasurement::COMM, FREE_MEM, IsDeflationRoot(), m_mpiCommIndex, CTimeMeasurement::MeasurementEnd(), and CTimeMeasurement::MeasurementStart().

Referenced by GatherEVIterationFromDeflationGroup().

| int CMPIManager::GetCurrentLoadBalanceCount (int nLBIndex) | static |

Get the current rank's load-balancing count.

Definition at line 608 of file MPIManager.cpp.

References m_nCurrentRank, and m_vectLoadBalance.

Referenced by CLanczosMethod::BuildWaveFunction(), CLanczosMethod::CalculateEigenVector(), CLanczosMethod::DoResidualCheck(), CSPLoop::executeSPLoop(), CLanczosMethod::InitLanczosVector(), CLanczosMethod::LanczosIteration(), CLanczosMethod::LanczosIterationLoop(), CLanczosMethod::MergeDegeneratedEigenvalues(), MergeVector(), MergeVectorEx_Optimal(), MergeVectorOptimal(), CLanczosMethod::RecalcuWaveFunction(), and CLanczosMethod::SortSolution().

| int CMPIManager::GetCurrentRank () | inline static |

Definition at line 40 of file MPIManager.h.

References m_nCurrentRank.

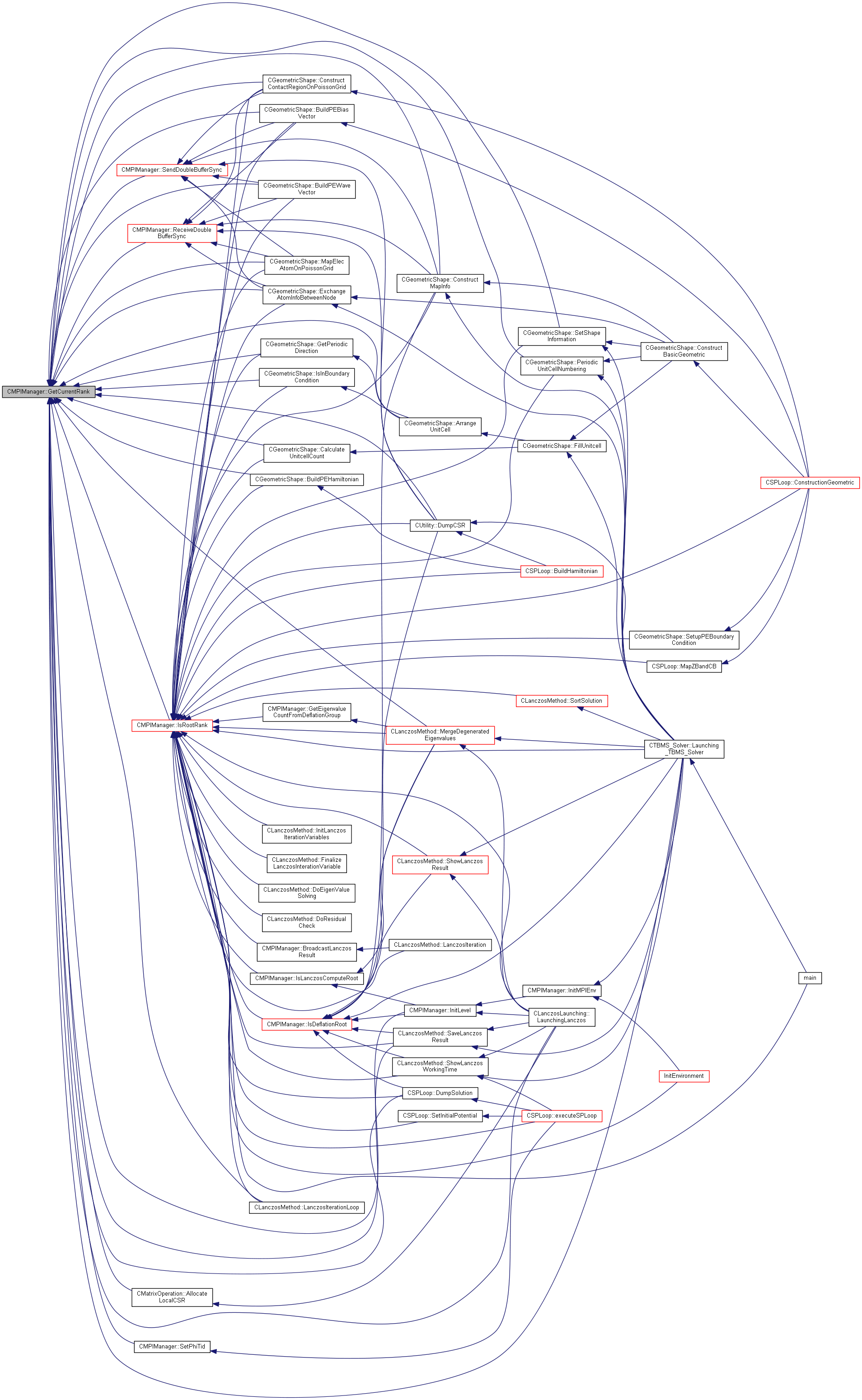

Referenced by CMatrixOperation::AllocateLocalCSR(), CGeometricShape::ArrangeUnitCell(), CGeometricShape::BuildPEBiasVector(), CGeometricShape::BuildPEHamiltonian(), CGeometricShape::BuildPEWaveVector(), CGeometricShape::CalculateUnitcellCount(), CGeometricShape::ConstructContactRegionOnPoissonGrid(), CGeometricShape::ConstructMapInfo(), CUtility::DumpCSR(), CSPLoop::DumpSolution(), CGeometricShape::ExchangeAtomInfoBetweenNode(), CGeometricShape::GetPeriodicDirection(), InitLevel(), CGeometricShape::IsInBoundaryCondition(), IsRootRank(), CLanczosMethod::LanczosIterationLoop(), CTBMS_Solver::Launching_TBMS_Solver(), CLanczosLaunching::LaunchingLanczos(), CGeometricShape::MapElecAtomOnPoissonGrid(), CLanczosMethod::MergeDegeneratedEigenvalues(), CGeometricShape::PeriodicUnitCellNumbering(), ReceiveDoubleBufferSync(), CLanczosMethod::SaveLanczosResult(), SendDoubleBufferSync(), SetPhiTid(), and CGeometricShape::SetShapeInformation().

| int CMPIManager::GetCurrentRank (MPI_Comm comm) | static |

Get the current node's rank number.

| comm | MPI_Comm |

Definition at line 216 of file MPIManager.cpp.

|

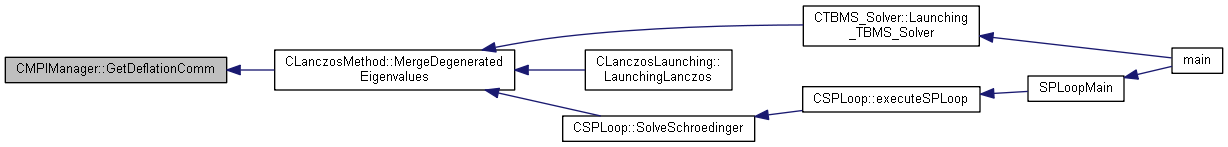

inlinestatic |

Getting Lanczos computing group MPI_Comm.

Definition at line 77 of file MPIManager.h.

References m_deflationComm.

Referenced by CLanczosMethod::MergeDegeneratedEigenvalues().

| int * CMPIManager::GetEigenvalueCountFromDeflationGroup (int nDeflationGroupCount, int nLocalEVCount) | static |

Collect the total eigenvalue count from all deflation groups.

| nDeflationGroupCount | Deflation group count |

| nLocalEVCount | Eigenvalue count of the local deflation group |

Definition at line 744 of file MPIManager.cpp.

References CTimeMeasurement::COMM, IsRootRank(), m_deflationComm, CTimeMeasurement::MeasurementEnd(), and CTimeMeasurement::MeasurementStart().

Referenced by CLanczosMethod::MergeDegeneratedEigenvalues().

| MPI_Comm CMPIManager::GetLanczosComputComm () | inline static |

Get the Lanczos computing group MPI_Comm.

Definition at line 76 of file MPIManager.h.

References m_mpiCommIndex.

Referenced by CLanczosMethod::MergeDegeneratedEigenvalues().

| unsigned int CMPIManager::GetLanczosGroupIndex () | inline static |

Get the Lanczos group index.

Definition at line 80 of file MPIManager.h.

References m_nLanczosGroupIndex.

Referenced by CLanczosMethod::LanczosIteration(), and CLanczosMethod::MergeDegeneratedEigenvalues().

| int CMPIManager::GetLoadBalanceCount (int nRank, int nLBIndex) | static |

| nRank | Target rank index |

Definition at line 168 of file MPIManager.cpp.

References m_nTotalNode, and m_vectLoadBalance.

Referenced by CMatrixOperation::AllocateLocalCSR(), InitCommunicationBufferMetric(), MergeVectorEx_Optimal(), and MergeVectorOptimal().

| MPI_Comm CMPIManager::GetMPIComm () | inline static |

Get MPI_Comm.

Definition at line 64 of file MPIManager.h.

References m_mpiCommIndex.

Referenced by CMatrixOperation::AllocateLocalCSR(), and CLanczosMethod::LanczosIterationLoop().

| int CMPIManager::GetRootRank () | inline static |

Get root rank.

Definition at line 57 of file MPIManager.h.

Referenced by BroadcastLanczosResult().

| int CMPIManager::GetTotalNodeCount () | inline static |

Get total node count.

Definition at line 42 of file MPIManager.h.

References m_nTotalNode.

Referenced by CMatrixOperation::AllocateLocalCSR(), CGeometricShape::ArrangeUnitCell(), CGeometricShape::BuildPEBiasVector(), CGeometricShape::BuildPEWaveVector(), CGeometricShape::CalculateUnitcellCount(), CGeometricShape::ConstructContactRegionOnPoissonGrid(), CGeometricShape::ConstructMapInfo(), CUtility::DumpCSR(), CSPLoop::DumpSolution(), CGeometricShape::ExchangeAtomInfoBetweenNode(), CGeometricShape::GetPeriodicDirection(), InitCommunicationBufferMetric(), InitEnvironment(), InitLevel(), CGeometricShape::IsInBoundaryCondition(), CLanczosMethod::LanczosIterationLoop(), CTBMS_Solver::Launching_TBMS_Solver(), CLanczosLaunching::LaunchingLanczos(), CGeometricShape::MapElecAtomOnPoissonGrid(), CLanczosMethod::MergeDegeneratedEigenvalues(), CMatrixOperation::MVMulEx_Optimal(), CGeometricShape::PeriodicUnitCellNumbering(), CLanczosMethod::SaveLanczosResult(), CGeometricShape::SetShapeInformation(), and CLanczosMethod::ShowLanczosWorkingTime().

| int CMPIManager::InitCommunicationBufferMetric () | static |

Initialize the MPI communication buffer for MVMul.

| nMatrixSize | Size of the matrix to solve |

Definition at line 655 of file MPIManager.cpp.

References GetLoadBalanceCount(), GetTotalNodeCount(), m_nLBCount, m_vectDispls, m_vectRecvCount, m_vectSendCount, CTimeMeasurement::MALLOC, CTimeMeasurement::MeasurementEnd(), and CTimeMeasurement::MeasurementStart().

Referenced by CTBMS_Solver::AllocateCSR(), CSPLoop::AllocateCSR(), and CLanczosLaunching::LaunchingLanczos().

| bool CMPIManager::InitLevel (int nMPILevel, int nFindingDegeneratedEVCount) | static |

Initialize the MPI level; the lowest level is for multi-node calculation for Lanczos.

| nMPILevel | MPI level count |

| nFindingDegeneratedEVCount | Deflation group count |

First, make the group for the Lanczos method.

Second, make the group for deflation Lanczos (vertically connected group).

Definition at line 53 of file MPIManager.cpp.

References CheckDeflationNodeCount(), FREE_MEM, GetCurrentRank(), GetTotalNodeCount(), IsDeflationRoot(), IsLanczosComputeRoot(), m_bMultiLevel, m_bNeedPostOperation, m_deflationComm, m_deflationGroup, m_lanczosGroup, m_mpiCommIndex, m_nCommWorldRank, m_nLanczosGroupIndex, SetMPIEnviroment(), and CUtility::SetShow().

Referenced by InitMPIEnv(), and CLanczosLaunching::LaunchingLanczos().
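The two grouping steps above can be pictured as assigning each world rank two "colors" of the kind `MPI_Comm_split` accepts: one for its Lanczos group and one for its vertically connected deflation group. The sketch below is an illustration under stated assumptions, not the code in MPIManager.cpp: it assumes ranks are assigned to Lanczos groups in contiguous blocks and that ranks at the same position in each block form one deflation group. `GroupColors`, `NodeCountFitsGroups`, and `ComputeColors` are hypothetical names.

```cpp
#include <cassert>

// Hypothetical types and helpers for illustration; not part of CMPIManager.
struct GroupColors {
    int lanczosColor;    // index of the Lanczos group this rank belongs to
    int deflationColor;  // position inside the Lanczos group; ranks sharing
                         // a position form one "vertical" deflation group
};

// Assumed fit condition: ranks divide evenly among the deflation groups.
bool NodeCountFitsGroups(int totalNodes, int groupCount) {
    return groupCount > 0 && totalNodes >= groupCount &&
           totalNodes % groupCount == 0;
}

// Assumed layout: contiguous blocks of ranks per Lanczos group.
GroupColors ComputeColors(int worldRank, int totalNodes, int groupCount) {
    assert(NodeCountFitsGroups(totalNodes, groupCount));
    assert(worldRank >= 0 && worldRank < totalNodes);
    const int nodesPerGroup = totalNodes / groupCount;
    return GroupColors{worldRank / nodesPerGroup, worldRank % nodesPerGroup};
}
```

For 6 ranks and 2 groups, ranks 0 to 2 land in Lanczos group 0 and ranks 3 to 5 in group 1; ranks 1 and 4 share deflation color 1.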

| bool CMPIManager::InitMPIEnv (bool &bMPI, CCommandFileParser::LPINPUT_CMD_PARAM lpParam) | static |

Initialize the MPI environment.

| bMPI | Whether the program runs in an MPI environment |

| lpParam | Option parameters for program launching |

Definition at line 875 of file MPIManager.cpp.

References InitLevel(), CCommandFileParser::INPUT_CMD_PARAM::nFindingDegeneratedEVCount, CCommandFileParser::INPUT_CMD_PARAM::nMPILevel, and SetMPIEnviroment().

Referenced by InitEnvironment(), and CTBMS_Solver::Launching_TBMS_Solver().

| bool CMPIManager::IsDeflationRoot () | inline static |

Check whether this rank is the root rank of deflation computation.

Definition at line 69 of file MPIManager.h.

References IsRootRank(), and m_deflationComm.

Referenced by CGeometricShape::ConstructMapInfo(), CUtility::DumpCSR(), CSPLoop::DumpSolution(), GatherVDouble(), GatherVInt(), InitLevel(), CTBMS_Solver::Launching_TBMS_Solver(), CLanczosMethod::MergeDegeneratedEigenvalues(), CLanczosMethod::RecalcuWaveFunction(), CLanczosMethod::SaveLanczosResult(), CLanczosMethod::ShowLanczosResult(), CLanczosMethod::ShowLanczosWorkingTime(), and CSPLoop::SolveSchroedinger().

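| bool CMPIManager::IsInMPIRoutine () | inline static |

Check whether this process is running in an MPI environment.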

Definition at line 45 of file MPIManager.h.

References m_bStartMPI.

Referenced by BroadcastVector().

| bool CMPIManager::IsLanczosComputeRoot () | inline static |

Check whether this rank is the root rank of Lanczos computation.

Definition at line 68 of file MPIManager.h.

References IsRootRank(), and m_mpiCommIndex.

Referenced by InitLevel(), and CLanczosMethod::MergeDegeneratedEigenvalues().

| bool CMPIManager::IsMultiLevelMPI () | inline static |

Check whether multilevel MPI is set.

Definition at line 65 of file MPIManager.h.

References m_bMultiLevel.

Referenced by CLanczosMethod::LanczosIteration(), CTBMS_Solver::Launching_TBMS_Solver(), CLanczosLaunching::LaunchingLanczos(), and CSPLoop::SolveSchroedinger().

| bool CMPIManager::IsRootRank () | static |

Check whether this node is the root rank.

Definition at line 182 of file MPIManager.cpp.

References GetCurrentRank().

Referenced by CTBMS_Solver::ApplyPhPotential(), BroadcastLanczosResult(), CSPLoop::BuildHamiltonian(), CGeometricShape::BuildPEBiasVector(), CGeometricShape::BuildPEHamiltonian(), CGeometricShape::BuildPEWaveVector(), CGeometricShape::CalculateUnitcellCount(), CGeometricShape::ConstructContactRegionOnPoissonGrid(), CSPLoop::ConstructionGeometric(), CGeometricShape::ConstructMapInfo(), CLanczosMethod::DoEigenValueSolving(), CLanczosMethod::DoResidualCheck(), CUtility::DumpCSR(), CSPLoop::DumpSolution(), CGeometricShape::ExchangeAtomInfoBetweenNode(), CSPLoop::executeSPLoop(), CLanczosMethod::FinalizeLanczosInterationVariable(), GetEigenvalueCountFromDeflationGroup(), CGeometricShape::GetPeriodicDirection(), InitEnvironment(), CLanczosMethod::InitLanczosIterationVariables(), IsDeflationRoot(), CGeometricShape::IsInBoundaryCondition(), IsLanczosComputeRoot(), CLanczosMethod::LanczosIteration(), CLanczosMethod::LanczosIterationLoop(), CTBMS_Solver::Launching_TBMS_Solver(), CLanczosLaunching::LaunchingLanczos(), main(), CGeometricShape::MapElecAtomOnPoissonGrid(), CSPLoop::MapZBandCB(), CLanczosMethod::MergeDegeneratedEigenvalues(), CGeometricShape::PeriodicUnitCellNumbering(), CLanczosMethod::SaveLanczosResult(), CSPLoop::SetInitialPotential(), CGeometricShape::SetShapeInformation(), CGeometricShape::SetupPEBoundaryCondition(), CLanczosMethod::ShowLanczosResult(), CLanczosMethod::ShowLanczosWorkingTime(), CSPLoop::SolvePoisson(), CSPLoop::SolveSchroedinger(), and CLanczosMethod::SortSolution().

| bool CMPIManager::IsRootRank (MPI_Comm comm) | static |

Check whether this node is the root rank in the MPI_Comm 'comm'.

| comm | MPI_Comm |

Definition at line 201 of file MPIManager.cpp.

References GetCurrentRank().

| void CMPIManager::LoadBlancing (int nElementCount) | static |

Load balancing for MPI. This function is for Lanczos solving with geometric construction.

| nElementCount | Load balancing count |

Definition at line 152 of file MPIManager.cpp.

References m_mpiCommIndex, m_nLBCount, m_nTotalNode, and m_vectLoadBalance.

Referenced by CTBMS_Solver::AllocateCSR(), and CSPLoop::AllocateCSR().
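As an illustration of what a load-balancing step like this can compute, the sketch below splits an element count across ranks as evenly as possible, giving the first ranks one extra element when the division is not exact. This is an assumed block distribution, not the scheme confirmed by MPIManager.cpp, and `BalanceLoad` is a hypothetical name.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Hypothetical helper (not part of CMPIManager): distributes elementCount
// items over rankCount ranks. Every rank gets elementCount / rankCount
// items and the first (elementCount % rankCount) ranks get one more.
std::vector<int> BalanceLoad(int elementCount, int rankCount) {
    assert(elementCount >= 0 && rankCount > 0);
    std::vector<int> counts(static_cast<std::size_t>(rankCount),
                            elementCount / rankCount);
    const int remainder = elementCount % rankCount;
    for (int r = 0; r < remainder; ++r) {
        ++counts[static_cast<std::size_t>(r)];
    }
    return counts;
}
```

A per-rank lookup in the style of `GetLoadBalanceCount(nRank, nLBIndex)` would then read one entry of such an array.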

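| void CMPIManager::MergeVector (CMatrixOperation::CVector *pVector, CMatrixOperation::CVector *pResultVector, unsigned int nMergeSize, int nLBIndex) | static |

Merge vector to sub rank.

| | pVector | Vector to share |

| [out] | pResultVector | Vector that stores the merged result |

| | nMergeSize | Vector size after merging |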

Definition at line 241 of file MPIManager.cpp.

References FREE_MEM, GetCurrentLoadBalanceCount(), m_mpiCommIndex, m_vectDispls, m_vectRecvCount, CMatrixOperation::CVector::m_vectValueImaginaryBuffer, CMatrixOperation::CVector::m_vectValueRealBuffer, CTimeMeasurement::MeasurementEnd(), CTimeMeasurement::MeasurementStart(), and CTimeMeasurement::MV_COMM.

Referenced by CMatrixOperation::MVMul().
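The references to `m_vectRecvCount` and `m_vectDispls` suggest a gather-style collective in which every rank contributes its local slice and receives the full vector; that reading is an assumption, since the source is not shown here. The serial sketch below only imitates the data movement: `localParts[r]` stands in for the slice owned by rank `r`, and `MergeSegments` is a hypothetical name.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Hypothetical serial stand-in (not part of CMPIManager) for merging
// variable-sized per-rank slices into one vector of length mergeSize.
// recvCounts[r] is the slice length of rank r, displs[r] its start offset.
std::vector<double> MergeSegments(
        const std::vector<std::vector<double>>& localParts,
        const std::vector<int>& recvCounts,
        const std::vector<int>& displs,
        std::size_t mergeSize) {
    assert(localParts.size() == recvCounts.size());
    assert(localParts.size() == displs.size());
    std::vector<double> merged(mergeSize, 0.0);
    for (std::size_t r = 0; r < localParts.size(); ++r) {
        assert(recvCounts[r] >= 0 && displs[r] >= 0);
        const std::size_t count = static_cast<std::size_t>(recvCounts[r]);
        const std::size_t offset = static_cast<std::size_t>(displs[r]);
        assert(localParts[r].size() == count);
        assert(offset + count <= mergeSize);
        for (std::size_t i = 0; i < count; ++i) {
            merged[offset + i] = localParts[r][i];
        }
    }
    return merged;
}
```

For a complex vector the same movement would be applied to the real and imaginary buffers separately.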

| void CMPIManager::MergeVector (CMatrixOperation::CVector *pVector, unsigned int nMergeSize, int nLBIndex) | static |

Merge vector to sub rank.

| [in,out] | pVector | Vector to share and merge into |

| | nMergeSize | Vector size after merging |

Modified by jhkang: the previous version had an error.

Definition at line 275 of file MPIManager.cpp.

References CTimeMeasurement::COMM, FREE_MEM, GetCurrentLoadBalanceCount(), m_vectDispls, m_vectRecvCount, CMatrixOperation::CVector::m_vectValueImaginaryBuffer, CMatrixOperation::CVector::m_vectValueRealBuffer, CTimeMeasurement::MeasurementEnd(), CTimeMeasurement::MeasurementStart(), and CMatrixOperation::CVector::SetSize().

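| void CMPIManager::MergeVectorEx_Optimal (CMatrixOperation::CVector *pVector, CMatrixOperation::CVector *pResultVector, unsigned int nMergeSize, double fFirstIndex, unsigned int nSizeFromPrevRank, unsigned int nSizeFromNextRank, unsigned int nSizetoPrevRank, unsigned int nSizetoNextRank, unsigned int *mPos, int nLBIndex) | static |

Merge vector for one-layer exchange.

| | pSrcVector | Vector to share |

| [out] | pResultVector | Vector that stores the merged result |

| | nMergeSize | Vector size after merging |

| | fFirstIndex | First index of the local vector |

| | nSizeFromPrevRank | Size received from the previous node |

| | nSizeFromNextRank | Size received from the next node |

| | nSizetoPrevRank | Size sent to the previous node |

| | nSizetoNextRank | Size sent to the next node |

| | mPos | Start indices of the previous, local, and next node |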

Definition at line 320 of file MPIManager.cpp.

References GetCurrentLoadBalanceCount(), GetLoadBalanceCount(), m_mpiCommIndex, m_nCurrentRank, m_nTotalNode, m_vectDispls, m_vectRecvCount, CMatrixOperation::CVector::m_vectValueImaginaryBuffer, CMatrixOperation::CVector::m_vectValueRealBuffer, CTimeMeasurement::MeasurementEnd(), CTimeMeasurement::MeasurementStart(), and CTimeMeasurement::MV_COMM.

Referenced by CMatrixOperation::MVMulEx_Optimal().

|

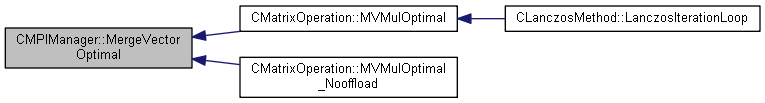

static |

Merge vector to sub rank, operated without vector class member function call.

| pSrcVector | Vector for sharing | |

| [out] | pResultVector | Vector for saving merging result |

| nMergeSize | Vector size that after mergsing | |

| fFirstIndex | First index for local vector index |

Definition at line 392 of file MPIManager.cpp.

References FREE_MEM, GetCurrentLoadBalanceCount(), GetLoadBalanceCount(), m_mpiCommIndex, m_nCurrentRank, m_nTotalNode, m_vectDispls, m_vectRecvCount, CMatrixOperation::CVector::m_vectValueImaginaryBuffer, CMatrixOperation::CVector::m_vectValueRealBuffer, CTimeMeasurement::MeasurementEnd(), CTimeMeasurement::MeasurementStart(), CTimeMeasurement::MV_COMM, CTimeMeasurement::MV_FREE_MEM, and CTimeMeasurement::MV_MALLOC.

Referenced by CMatrixOperation::MVMulOptimal(), and CMatrixOperation::MVMulOptimal_Nooffload().

|

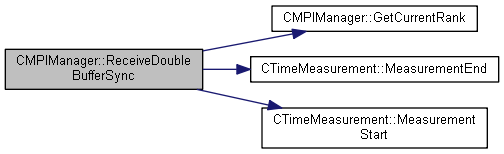

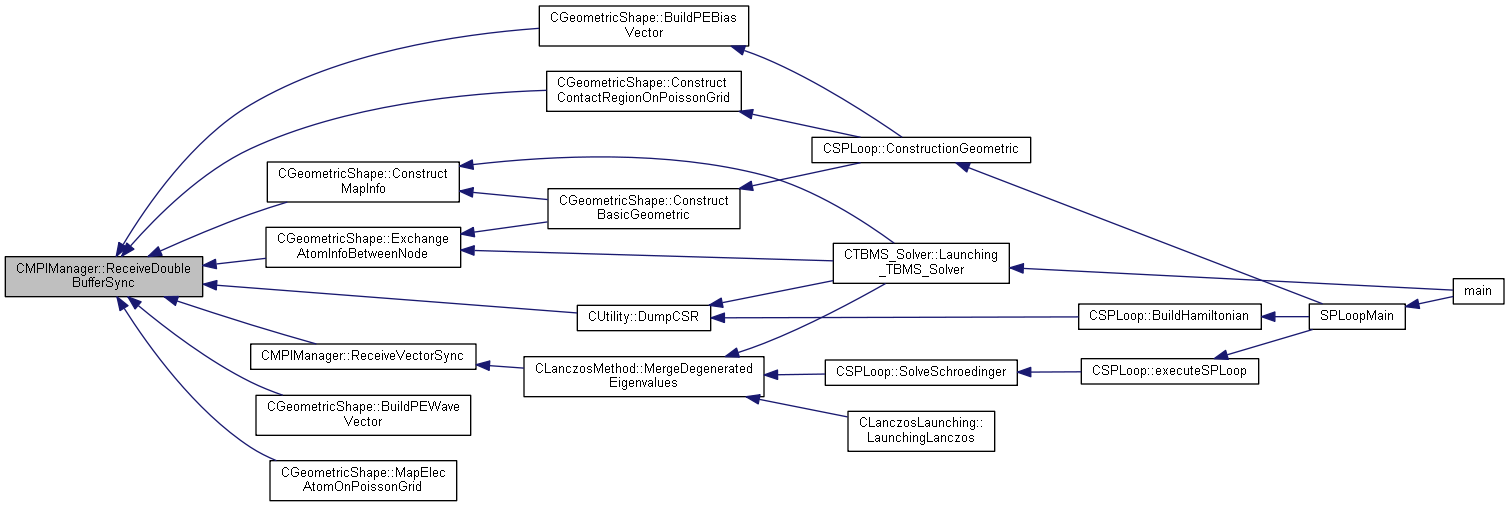

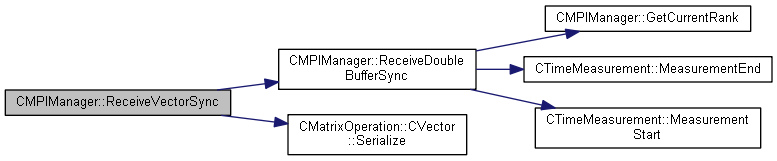

static |

Receivinging buffer for double data array with sync.

| nSourceRank | Source rank index |

| pBuffer | Data buffer that want to receive |

| nSize | Data buffer size |

| req | MPI request parameter |

Definition at line 712 of file MPIManager.cpp.

References CTimeMeasurement::COMM, GetCurrentRank(), m_mpiCommIndex, CTimeMeasurement::MeasurementEnd(), and CTimeMeasurement::MeasurementStart().

Referenced by CGeometricShape::BuildPEBiasVector(), CGeometricShape::BuildPEWaveVector(), CGeometricShape::ConstructContactRegionOnPoissonGrid(), CGeometricShape::ConstructMapInfo(), CUtility::DumpCSR(), CGeometricShape::ExchangeAtomInfoBetweenNode(), CGeometricShape::MapElecAtomOnPoissonGrid(), and ReceiveVectorSync().

|

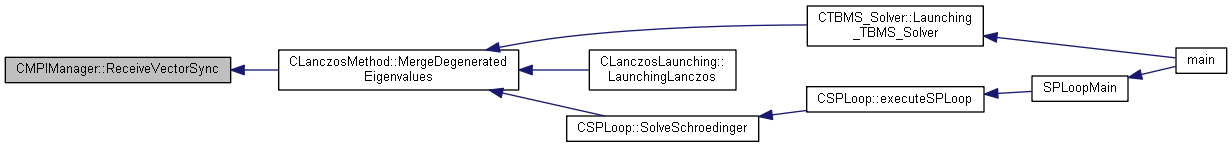

static |

Receiving Vector with sync.

| nSourceRank | Source rank for receiving data |

| pVector | Receiving buffer |

| nSize | Receiving size |

| req | MPI_Request for MPI_Recv |

| commWorld | MPI_Comm for Receiving data |

Definition at line 858 of file MPIManager.cpp.

References FREE_MEM, ReceiveDoubleBufferSync(), and CMatrixOperation::CVector::Serialize().

Referenced by CLanczosMethod::MergeDegeneratedEigenvalues().

|

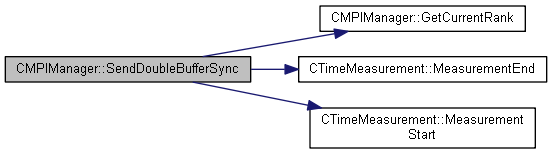

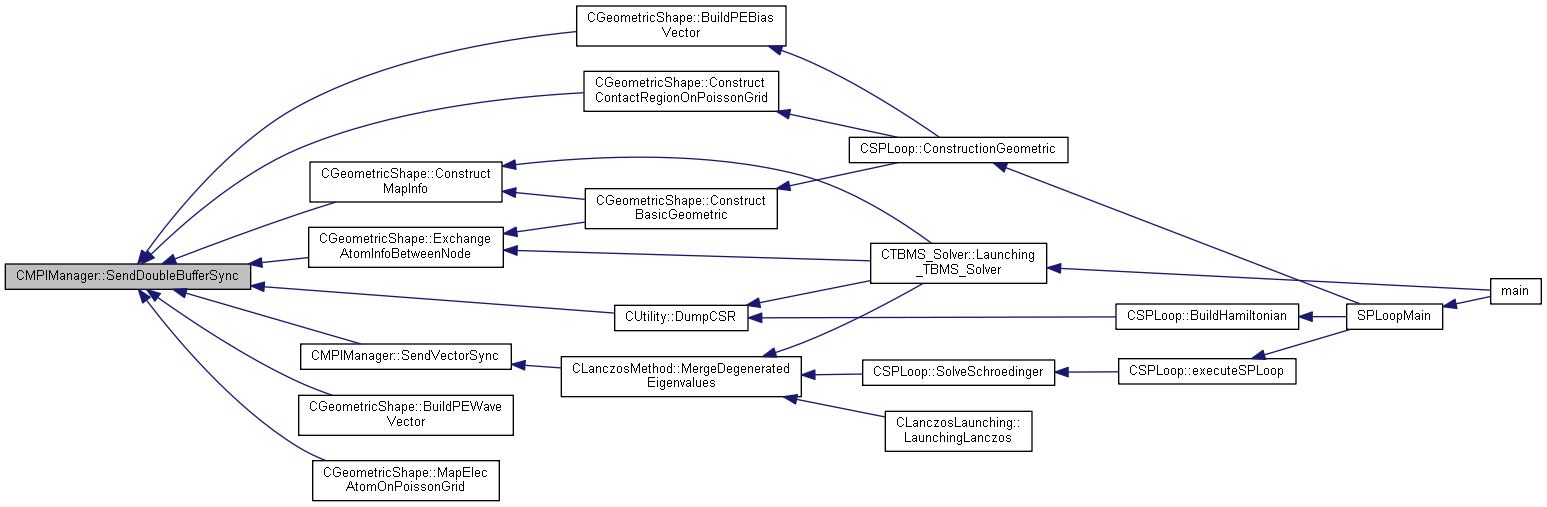

static |

Sending buffer for double data array with sync.

| nTargetRank | Target rank index |

| pBuffer | Data buffer that want to send |

| nSize | Data buffer size |

| req | MPI request parameter |

Definition at line 685 of file MPIManager.cpp.

References CTimeMeasurement::COMM, GetCurrentRank(), m_mpiCommIndex, CTimeMeasurement::MeasurementEnd(), and CTimeMeasurement::MeasurementStart().

Referenced by CGeometricShape::BuildPEBiasVector(), CGeometricShape::BuildPEWaveVector(), CGeometricShape::ConstructContactRegionOnPoissonGrid(), CGeometricShape::ConstructMapInfo(), CUtility::DumpCSR(), CGeometricShape::ExchangeAtomInfoBetweenNode(), CGeometricShape::MapElecAtomOnPoissonGrid(), and SendVectorSync().

| void CMPIManager::SendVectorSync (int nTargetRank, CMatrixOperation::CVector *pVector, int nSize, MPI_Request *req, MPI_Comm commWorld=MPI_COMM_NULL) | static |

Send a vector with sync.

| nTargetRank | Sending target rank |

| pVector | Sending buffer |

| nSize | Sending size |

| req | MPI_Request for MPI_Send |

| commWorld | MPI_Comm for sending data |

Definition at line 838 of file MPIManager.cpp.

References FREE_MEM, SendDoubleBufferSync(), and CMatrixOperation::CVector::Serialize().

Referenced by CLanczosMethod::MergeDegeneratedEigenvalues().
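Because this function references `CMatrixOperation::CVector::Serialize()` and `SendDoubleBufferSync()`, the vector is presumably flattened into a plain `double` buffer before it is sent. The sketch below shows one possible flattening of a complex vector, with all real parts followed by all imaginary parts. The layout, the `ComplexVector` type, and the `Pack`/`Unpack` names are assumptions made for illustration; the real `Serialize()` format is not documented here.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Hypothetical stand-in for a complex vector with separate real and
// imaginary buffers; not the actual CMatrixOperation::CVector.
struct ComplexVector {
    std::vector<double> real;
    std::vector<double> imag;
};

// Assumed layout: [re_0 .. re_{n-1}, im_0 .. im_{n-1}].
std::vector<double> Pack(const ComplexVector& v) {
    assert(v.real.size() == v.imag.size());
    std::vector<double> buffer;
    buffer.reserve(2 * v.real.size());
    buffer.insert(buffer.end(), v.real.begin(), v.real.end());
    buffer.insert(buffer.end(), v.imag.begin(), v.imag.end());
    return buffer;
}

// Inverse of Pack: splits the buffer back into real and imaginary halves.
ComplexVector Unpack(const std::vector<double>& buffer) {
    assert(buffer.size() % 2 == 0);
    const std::ptrdiff_t n = static_cast<std::ptrdiff_t>(buffer.size() / 2);
    ComplexVector v;
    v.real.assign(buffer.begin(), buffer.begin() + n);
    v.imag.assign(buffer.begin() + n, buffer.end());
    return v;
}
```

With this layout a vector of `n` complex entries travels as a single buffer of `2 * n` doubles, so one send and one receive are enough per vector.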

| void CMPIManager::SetMPIEnviroment (int nRank, int nTotalNode) | static |

Set MPI environment.

| nRank | Current rank index |

| nTotalNode | Total rank count |

Definition at line 141 of file MPIManager.cpp.

References m_bStartMPI, m_nCurrentRank, and m_nTotalNode.

Referenced by InitLevel(), InitMPIEnv(), and CLanczosLaunching::LaunchingLanczos().

|

static |

Definition at line 894 of file MPIManager.cpp.

References GetCurrentRank().

Referenced by CSPLoop::executeSPLoop().

|

static |

Wait function for receiving a double buffer with sync.

| req | MPI request parameter |

Definition at line 726 of file MPIManager.cpp.

References m_ReceiveDoubleAsyncRequest.

|

static |

Wait function for sending a double buffer with sync.

| req | MPI request parameter |

Definition at line 699 of file MPIManager.cpp.

References m_SendDoubleAsyncRequest.

|

static private |

Flag for Multilevel MPI group.

Definition at line 105 of file MPIManager.h.

Referenced by InitLevel(), and IsMultiLevelMPI().

|

static private |

|

static private |

Whether MPI_Init has been called.

Definition at line 88 of file MPIManager.h.

Referenced by FinalizeManager(), IsInMPIRoutine(), and SetMPIEnviroment().

|

static private |

Deflation computing MPI_Comm.

Definition at line 101 of file MPIManager.h.

Referenced by FinalizeManager(), GatherEVFromDeflationGroup(), GatherEVIterationFromDeflationGroup(), GetDeflationComm(), GetEigenvalueCountFromDeflationGroup(), InitLevel(), and IsDeflationRoot().

|

static private |

MPI Group for Deflation computation.

Definition at line 103 of file MPIManager.h.

Referenced by FinalizeManager(), and InitLevel().

|

static private |

MPI Group for Lanczos computation.

Definition at line 102 of file MPIManager.h.

Referenced by FinalizeManager(), and InitLevel().

|

static private |

Lanczos Method MPI_Comm.

Definition at line 100 of file MPIManager.h.

Referenced by AllReduceComlex(), AllReduceDouble(), Barrier(), BroadcastBool(), BroadcastDouble(), BroadcastInt(), BroadcastLanczosResult(), FinalizeManager(), GatherVDouble(), GatherVInt(), GetLanczosComputComm(), GetMPIComm(), InitLevel(), IsLanczosComputeRoot(), LoadBlancing(), MergeVector(), MergeVectorEx_Optimal(), MergeVectorOptimal(), ReceiveDoubleBufferSync(), and SendDoubleBufferSync().

|

static private |

|

static private |

MPI Rank.

Definition at line 85 of file MPIManager.h.

Referenced by FinalizeManager(), GetCurrentLoadBalanceCount(), GetCurrentRank(), MergeVectorEx_Optimal(), MergeVectorOptimal(), and SetMPIEnviroment().

|

static private |

MPI Group index for Lanczos group.

Definition at line 104 of file MPIManager.h.

Referenced by GetLanczosGroupIndex(), and InitLevel().
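A group index of this kind is typically what distinguishes one Lanczos group from another when the world communicator is split into several groups. The sketch below illustrates one common way to derive such an index. It is an assumption, not the logic of InitLevel(): it supposes ranks are partitioned into contiguous, equally sized blocks, and `GroupPlacement` and `PlaceRank` are hypothetical names.

```cpp
#include <cassert>

// Hypothetical layout (assumption): ranks [0, groupSize) form group 0,
// the next groupSize ranks form group 1, and so on.
struct GroupPlacement {
    int groupIndex;  // which group the rank belongs to (the "color" of a communicator split)
    int rankInGroup; // position of the rank inside its group (the "key")
};

GroupPlacement PlaceRank(int worldRank, int totalNodes, int groupCount)
{
    // This sketch only handles node counts that divide evenly into groups.
    assert(groupCount > 0 && totalNodes % groupCount == 0);
    assert(worldRank >= 0 && worldRank < totalNodes);
    const int groupSize = totalNodes / groupCount;
    return GroupPlacement{worldRank / groupSize, worldRank % groupSize};
}
```

With 8 nodes and 2 groups, for instance, world rank 5 lands in group 1 at position 1. The two values could then serve as the color and key arguments of `MPI_Comm_split`.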

|

static private |

Definition at line 106 of file MPIManager.h.

Referenced by FinalizeManager(), InitCommunicationBufferMetric(), and LoadBlancing().

|

static private |

MPI Level.

Definition at line 98 of file MPIManager.h.

|

static private |

Total node count.

Definition at line 87 of file MPIManager.h.

Referenced by CheckDeflationNodeCount(), FinalizeManager(), GetLoadBalanceCount(), GetTotalNodeCount(), LoadBlancing(), MergeVectorEx_Optimal(), MergeVectorOptimal(), and SetMPIEnviroment().

|

static private |

Bank information after MPI split.

Definition at line 94 of file MPIManager.h.

Referenced by FinalizeManager().

|

static private |

Data buffer for MPI Communication.

Definition at line 90 of file MPIManager.h.

|

static private |

Data buffer for Vector converting.

Definition at line 91 of file MPIManager.h.

|

static private |

Request for receiving double.

Definition at line 97 of file MPIManager.h.

Referenced by WaitReceiveDoubleBufferAsync().

|

static private |

Request for sending double.

Definition at line 96 of file MPIManager.h.

Referenced by WaitSendDoubleBufferSync().

|

static private |

Displacements for MPI communication.

Definition at line 95 of file MPIManager.h.

Referenced by FinalizeManager(), InitCommunicationBufferMetric(), MergeVector(), MergeVectorEx_Optimal(), and MergeVectorOptimal().
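Variable-count collectives such as `MPI_Gatherv` and `MPI_Allgatherv` take a displacement array alongside the per-rank receive counts. When the blocks are packed back to back, each displacement is the running total of the counts before it. The sketch below shows that calculation. Whether InitCommunicationBufferMetric() uses this exact layout is an assumption, and `BuildDisplacements` is a hypothetical name.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Exclusive prefix sum: displs[i] is the offset at which rank i's block
// starts in the gathered buffer, assuming blocks are stored contiguously.
std::vector<int> BuildDisplacements(const std::vector<int>& recvCounts)
{
    std::vector<int> displs(recvCounts.size(), 0);
    for (std::size_t i = 1; i < recvCounts.size(); ++i) {
        assert(recvCounts[i - 1] >= 0); // counts must be non-negative
        displs[i] = displs[i - 1] + recvCounts[i - 1];
    }
    return displs;
}
```

For receive counts of 3, 3 and 2, the blocks start at offsets 0, 3 and 6.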

|

static private |

Load balancing for MPI Communication.

Definition at line 89 of file MPIManager.h.

Referenced by FinalizeManager(), GetCurrentLoadBalanceCount(), GetLoadBalanceCount(), and LoadBlancing().
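As an illustration of what a per-rank load-balancing table can hold, the sketch below splits an element count as evenly as possible across the nodes, giving the leftover elements to the lowest ranks. This is an assumed scheme for illustration only. LoadBlancing() is described as serving Lanczos solving with geometric construction, so its actual distribution may differ. `SplitElements` is a hypothetical name.

```cpp
#include <cassert>
#include <vector>

// Assumed scheme: every rank gets elementCount / nodeCount elements, and the
// first (elementCount % nodeCount) ranks each receive one extra element.
std::vector<int> SplitElements(int elementCount, int nodeCount)
{
    assert(elementCount >= 0 && nodeCount > 0);
    const int base = elementCount / nodeCount;
    const int extra = elementCount % nodeCount;
    std::vector<int> counts(nodeCount, base);
    for (int rank = 0; rank < extra; ++rank) {
        ++counts[rank];
    }
    return counts;
}
```

Splitting 10 elements over 4 nodes gives counts of 3, 3, 2 and 2. A lookup in the style of GetLoadBalanceCount(nRank, nLBIndex) would then read one entry from such a table.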

|

static private |

Receiving count variable for MPI communication.

Definition at line 92 of file MPIManager.h.

Referenced by FinalizeManager(), InitCommunicationBufferMetric(), MergeVector(), MergeVectorEx_Optimal(), and MergeVectorOptimal().

|

static private |

Sending count variable for MPI communication.

Definition at line 93 of file MPIManager.h.

Referenced by FinalizeManager(), and InitCommunicationBufferMetric().